Atomic Data Overview

Atomic Data is a modular specification for sharing, modifying and modeling graph data. It combines the ease of use of JSON, the connectivity of RDF (linked data) and the reliability of type-safety.

Atomic Data uses links to connect pieces of data, and therefore makes it easier to connect datasets to each other - even when these datasets exist on separate machines.

AtomicServer

AtomicServer is an open source, powerful graph database + headless CMS. It’s the reference implementation for the Atomic Data specification, written in Rust.

Atomic Data Core

Atomic Data has been designed with the following goals in mind:

- Give people more control over their data

- Make linked data easier to use

- Make it easier for developers to build highly interoperable apps

- Make standardization easier and cheaper

Atomic Data is Linked Data, as it is a strict subset of RDF.

It is type-safe (you know if something is a string, number, date, URL, etc.) and extensible through Atomic Schema, which means that you can re-use or define your own Classes, Properties and Datatypes.

The default serialization format for Atomic Data is JSON-AD, which is simply JSON where each key is a URL of an Atomic Property.

These Properties are responsible for setting the datatype (to ensure type-safety) and setting shortnames (which help to keep names short, for example in JSON serialization) and descriptions (which provide semantic explanations of what a property should be used for).

Read more about Atomic Data Core

Atomic Data Extended

Atomic Data Extended is a set of extra modules (on top of Atomic Data Core) that deal with data that changes over time, authentication, and authorization.

- Commits communicate state changes. These Commits are signed using cryptographic keys, which ensures that every change can be audited. Commits are also used to construct a history of versions.

- Agents are Users that enable authentication. They are Resources with their own Public and Private keys, which they use to identify themselves.

- Collections: querying, filtering, sorting and pagination.

- Paths: traverse graphs.

- Hierarchies used for authorization and keeping data organized. Similar to folder structures on file-systems.

- Invites: create new users and provide them with rights.

- WebSockets: real-time updates.

- Endpoints: provide machine-readable descriptions of web services.

- Files: upload, download and metadata for files.

Tools & libraries

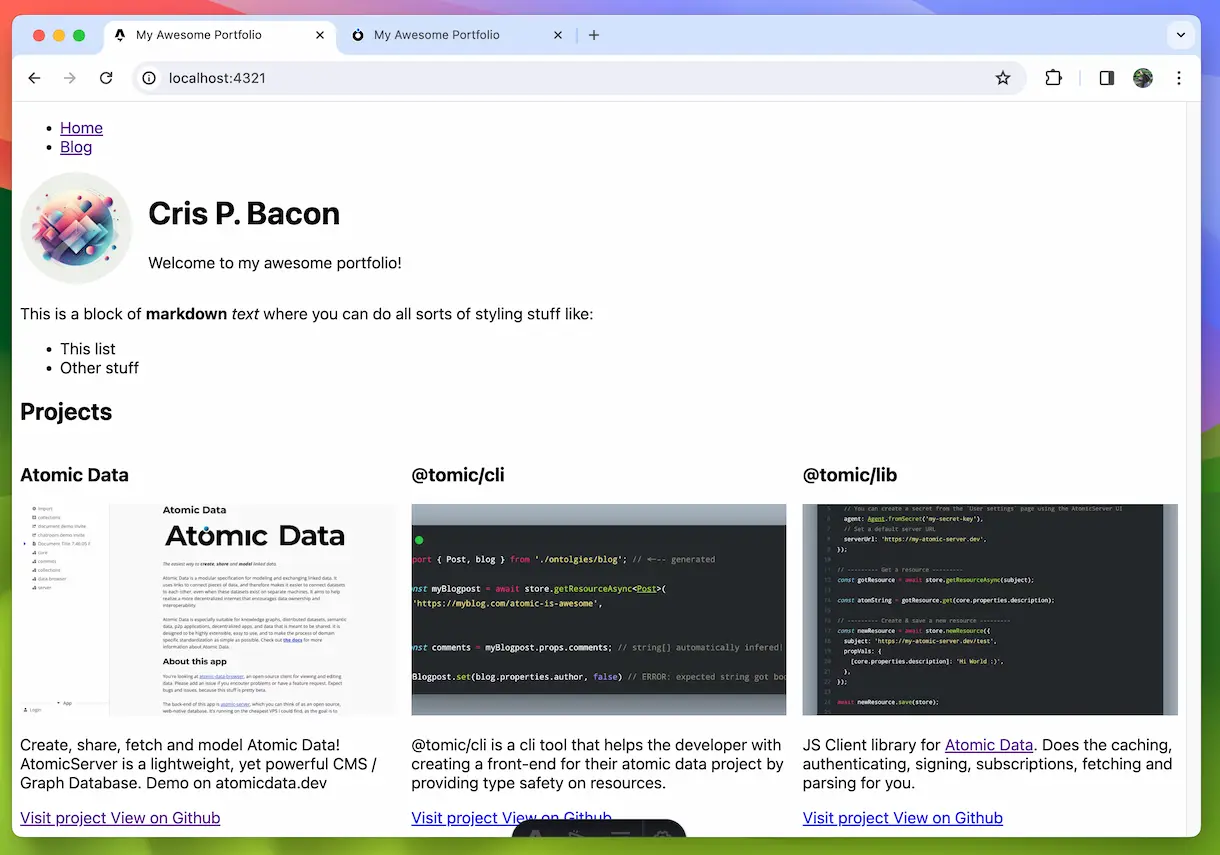

- Browser app atomic-data-browser (demo on atomicdata.dev)

- Build a react app using typescript & react libraries. Start with the react template on codesandbox

- Host your own atomic-server (powers atomicdata.dev, run with

docker run -p 80:80 -v atomic-storage:/atomic-storage joepmeneer/atomic-server) - Discover the command line tool: atomic-cli (

cargo install atomic-cli) - Use the Rust library: atomic-lib

Get involved

Make sure to join our Discord if you’d like to discuss Atomic Data with others.

Status

Keep in mind that none of the Atomic Data projects has reached a v1, which means that breaking changes can happen.

Reading these docs

This is written mostly as a book, so reading it in the order of the Table of Contents will probably give you the best experience. That being said, feel free to jump around - links are often used to refer to earlier discussed concepts. If you encounter any issues while reading, please leave an issue on Github. Use the arrows on the side / bottom to go to the next page.

Table of contents

What is Atomic Data

AtomicServer

Guides

Specification

Use Atomic Data

Acknowledgements | Newsletter | Get involved

Motivation: Why Atomic Data?

Give people more control over their data

The world wide web was designed by Tim Berners-Lee to be a decentralized network of servers that help people share information. As I’m writing this, it is exactly 30 years ago that the first website has launched. Unfortunately, the web today is not the decentralized network it was supposed to be. A handful of large tech companies are in control of how the internet is evolving, and where and how our data is being stored. The various services that companies like Google and Microsoft offer (often for free) integrate really well with their other services, but are mostly designed to lock you in. Vendor lock-in means that it is often difficult to take your information from one app to another. This limits innovation, and limits users to decide how they want to interact with their data. Companies often have incentives that are not fully aligned with what users want. For example, Facebook sorts your newsfeed not to make you satisfied, but to make you spend as much time looking at ads. They don’t want you to be able to control your own newsfeed. Even companies like Apple, that don’t have an ad-revenue model, still have a reason to (and very much do) lock you in. To make things even worse, even open-source projects made by volunteers often don’t work well together. That’s not because of bad intentions, that’s because it is hard to make things interoperable.

If we want to change this, we need open tech that works really well together. And if we want that, we need to standardize. The existing standards are well-suited for documents and webpages, but not for structured personal data. If we want to have that, we need to standardize the read-write web, which includes standardizing how items are changed, how their types are checked, how we query lists, and more. I want all people to have a (virtual) private server that contains their own data, that they control. This Personal Data Store could very well be an old smartphone with a broken screen that is always on, running next to your router.

Atomic Data is designed to be a standard that achieves this. But we need more than a standard to get adoption - we need implementations. That’s why I’ve been working on a server, various libraries, a GUI and more - all MIT licensed. If Atomic Data will be successful, there will likely be other, better implementations.

Linked data is awesome, but it is too difficult for developers in its current form

Linked data (RDF / the semantic web) enables us to use the web as a large, decentralized graph database. Using links everywhere in data has amazing merits: links remove ambiguity, they enable exploration, they enable connected datasets. But the existing specs are too difficult to use, and that is harming adoption.

At my company Ontola, we’ve been working with linked data quite intensely for the last couple of years. We went all-in on RDF, and challenged ourselves to create software that communicates exclusively using it. That has been an inspiring, but at times also a frustrating journey. While building our e-democracy platform Argu.co, we had to solve many RDF related problems. How to properly model data in RDF? How to deal with sequences? How to communicate state changes? Which serialization format to use? How to convert RDF to HTML, and build a front-end? We tackled some of these problems by having a tight grip on the data that we create (e.g. we know the type of data, because we control the resources), and another part is creating new protocols, formats, tools, and libraries. But it took a long time, and it was hard. It’s been almost 15 years since the introduction of linked data, and its adoption has been slow. We know that some of its merits are undeniable, and we truly want the semantic web to succeed. I believe the lack of growth partially has to do with a lack of tooling, but also with some problems that lie in the RDF data model.

Atomic Data aims to take the best parts from RDF, and learn from the past to make a more developer-friendly, performant and reliable data model to achieve a truly linked web. Read more about how Atomic Data relates to RDF, and why these changes have been made.

Make standardization easier and cheaper

Standards for data sharing are great, but creating one can be very costly endeavor. Committees with stakeholders write endless documents describing the intricacies of domain models, which fields are allowed and which are required, and how data is serialized. In virtually all cases, these documents are only written for humans - and not for computers. Machine readable ways to describe data models like UML diagrams and OpenAPI specifications (also known as Swagger) help to have machine-readable descriptions, but these are still not really used by machines - they are mostly only used to generate visualizations for humans. This ultimately means that implementations of a standard have to be manually checked for compliance, which often results in small (yet important) differences that severely limit interoperability. These implementations will also often want to extend the original definitions, but they are almost always unable to describe what they have extended.

Standardizing with Atomic Data solves these issues. Atomic Data takes the semantic value of ontologies, and merges it with a machine-readable schemas. This makes standards created using Atomic Data easy to read for humans, and easy to validate for computers (which guarantees interoperability). Atomic Data has a highly standardized protocol for fetching data, which means that Atomic Schemas can link to each other, and re-use existing Properties. For developers (the people who need to actually implement and use the data that has been standardized), this means their job becomes easier. Because Properties have URLs, it becomes trivial to add new Properties that were initially not in the main specification, without sacrificing type safety and validation abilities.

Make it easier for developers to build feature-rich, interoperable apps

Every time a developer builds an application, they have to figure a lot of things out. How to design the API, how to implement forms, how to deal with authentication, authorization, versioning, search… A lot of time is essentially wasted on solving these issues time and time again.

By having a more complete, strict standard, Atomic Data aims to decrease this burden. Atomic Schema enables developers to easily share their datamodels, and re-use those from others. Atomic Commits helps developers to deal with versioning, history, undo and audit logs. Atomic Hierarchies provides an intuitive model for authorization and access control. And finally, the existing open source Atomic Data software (such as a server + database, a browser GUI, various libraries and React templates) help developers to have these features without having to do the heavy lifting themselves.

Strategy, history and roadmap for Atomic Data

We have the ambition to make the internet more interoperable. We want Atomic Data to be a commonly used specification, enabling a vast amount of applications to work together and share information. This means we need a lot of people to understand and contribute to Atomic Data. In this document, discuss the strategic principles we use, the steps we took, and the path forward. This should help you understand how and where you may be able to contribute.

Strategy for adoption

- Work on both specification and implementations (both client and server side) simultaneously to make sure all ideas are both easily explainable and properly implementable. Don’t design a spec with a large committee over many months, only to learn that it has implementation issues later on.

- Create libraries whenever possible. Enable other developers to re-use the technology in their own stacks. Keep the code as modular as possible.

- Document everything. Not just your APIs - also your ideas, considerations and decisions.

- Do everything public. All code is open source, all issues are publicly visible. Allow outsiders to learn everything and start contributing.

- Make an all-in-one workspace app that stand on its own. Atomic Data may be an abstract, technical story, but we still need end-user friendly applications that solve actual problems if we want to get as much adoption as possible.

- Let realistic use cases guide API design. Don’t fall victim to spending too much time for extremely rare edge-cases, while ignoring more common issues and wishes.

- Familiarity first. Make tools and specs that feel familiar, build libraries for popular frameworks, and stick to conventions whenever possible.

History

- First draft of specification (2020-06). Atomic Data started as an unnamed bundle of ideas and best practices to improve how we work with linked data, but quickly turned into a single (draft) specification. The idea was to start with a cohesive and easy to understand documentation, and use that as a stepping stone for writing the first code. After this, the code and specification should both be worked on simultaneously to make sure ideas are both easily explainable and properly implementable. Many of the earliest ideas were changed to make implementation easier.

- atomic-cli + atomic-lib (2020-07). The CLI functioned as the first platform to explore some of the most core ideas of Atomic Data, such as Properties and fetching.

atomic_libis the place where most logic resides. Written in Rust. - AtomicServer (2020-08). The server (using the same

atomic_libas the CLI) should be a fast, lightweight server that must be easy to set-up. Functions as a graph database with no dependencies. - Collections (2020-10). Allows users to perform basic queries, filtering, sorting and pagination.

- Commits (2020-11). Allow keeping track of an event-sourced log of all activities that mutate resources, which in turn allows for versioning and adding new types of indexes later on.

- JSON-AD (2021-02). Instead of the earlier proposed serialization format

.ad3, we moved to the more familiarjson-ad. - Atomic-Data-Browser (2021-02). We wanted typescript and react libraries, as well as a nice interactive GUI that works in the browser. It should implement all relevant parts of the specification.

- Endpoints (2021-03). Machine readable API endpoints (think Swagger / OpenAPI spec) for things like versioning, path traversal and more.

- Classes and Properties editable from the browser (2021-04). The data-browser is now powerful enough to use for managing the core ontological data of the project.

- Hierarchies & Invitations (2021-06). Users can set rights, structure Resources and invite new people to collaborate.

- Websockets (2021-08). Live synchronization between client and server.

- Use case: Document Editor (2021-09). Notion-like editor with real-time synchronization.

- Full-text search (2021-11). Powered by Tantivy.

- Authentication for read access (2021-11). Allows for private data.

- Desktop support (2021-12). Run Atomic-Server on the desktop, powered by Tauri. Easier install UX, system tray icon.

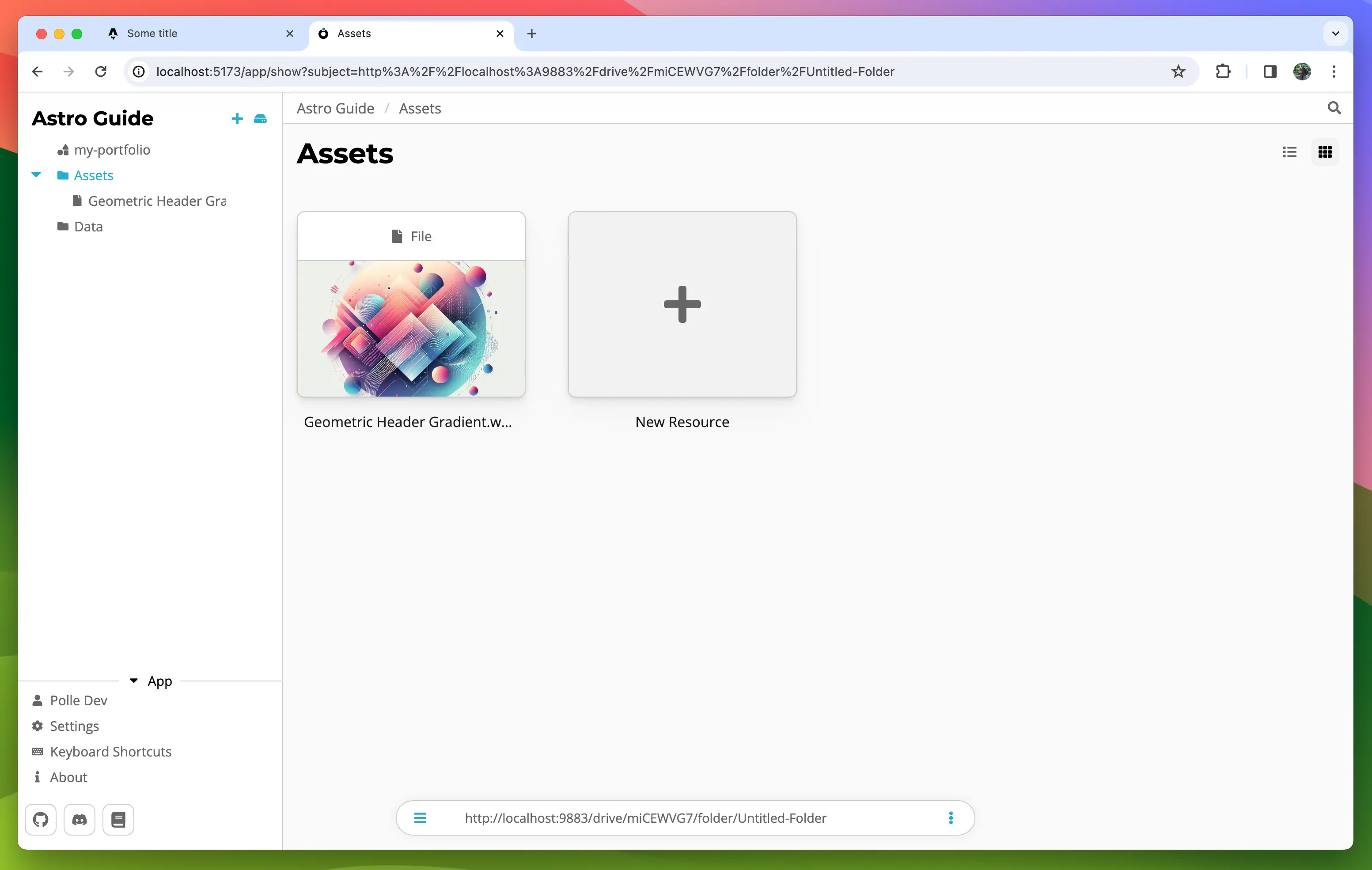

- File management (2021-12). Upload, download and view Files.

- Indexed queries (2022-01). Huge performance increase for queries. Allows for far bigger datasets.

- Use case: ChatRoom (2022-04). Group chat application. To make this possible, we had to extend the Commit model with a

pushaction, and allow Plugins to create new Commits. - JSON-AD Publishing and Importing (2022-08). Creating and consuming Atomic Data becomes a whole lot easier.

- @tomic/svelte (2022-12). Library for integrating Atomic Data with Svelte(Kit).

- Atomic Tables (2023-09). A powerful table editor with keyboard / copy / paste / sort support that makes it easier to model and edit data.

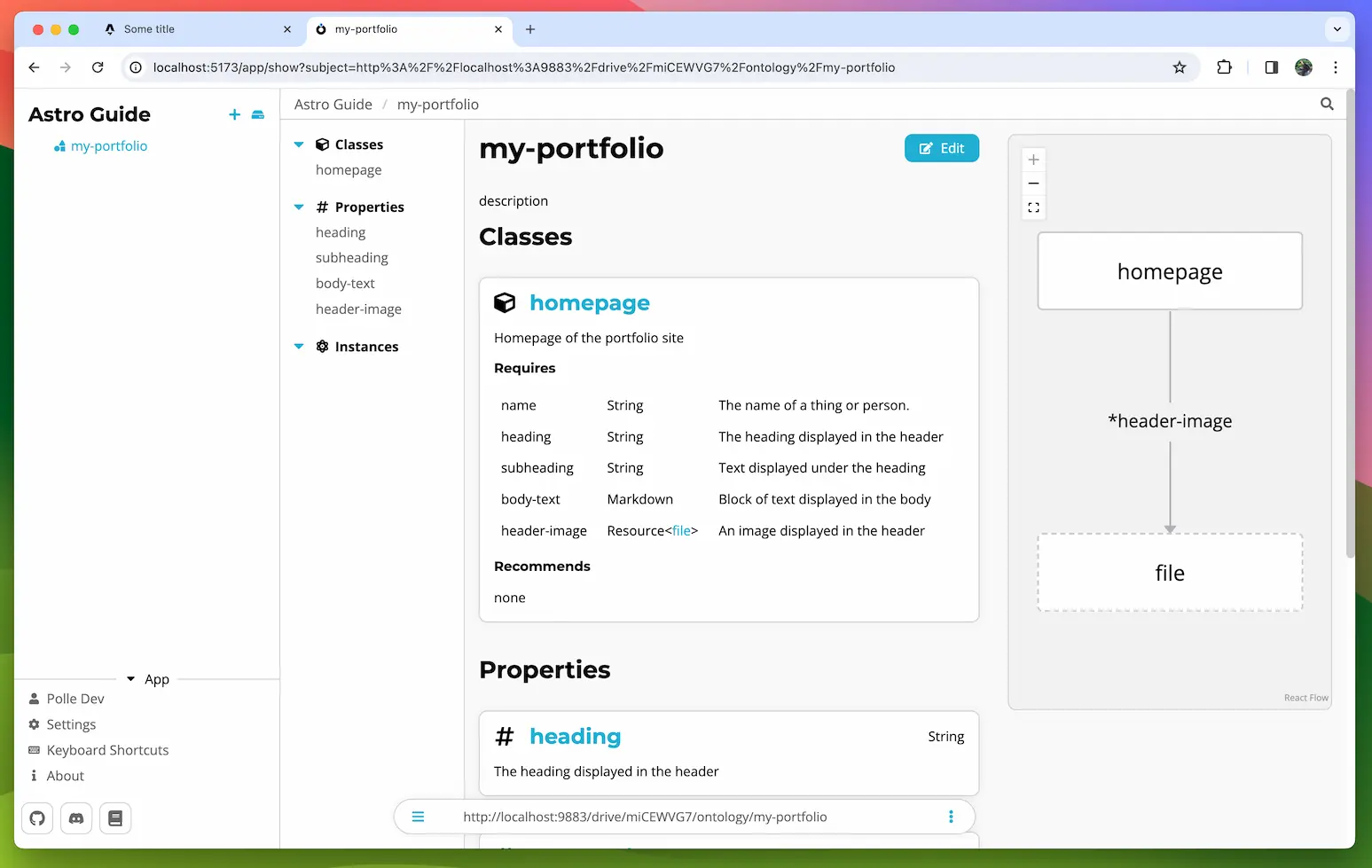

- Ontology Editor (2023-10). Easily create & edit Classes, Properties and Ontologies.

Where we’re at

Most of the specification seems to become pretty stable. The implementations are working better every day, although 1.0 releases are still quite a bit far away. At this point, the most important thing is to get developers to try out Atomic Data and provide feedback. That means not only make it easy to install the tools, but also allow people to make Atomic Data without using any of our own tools. That’s why we’re now working on the JSON-AD and Atomizer projects (see below).

Roadmap

- Video(s) about Atomic Data (2024 Q1). Explain what Atomic Data is, why we’re doing this, and how to get started.

- Improved document editor (2024). Better support for multi-line selection, more data types, etc.

- E-mail registration (2024 Q1). This makes it easier for users to get started, and de-emphasizes the importance of private key management, as user can register new Private Keys using their e-mail address.

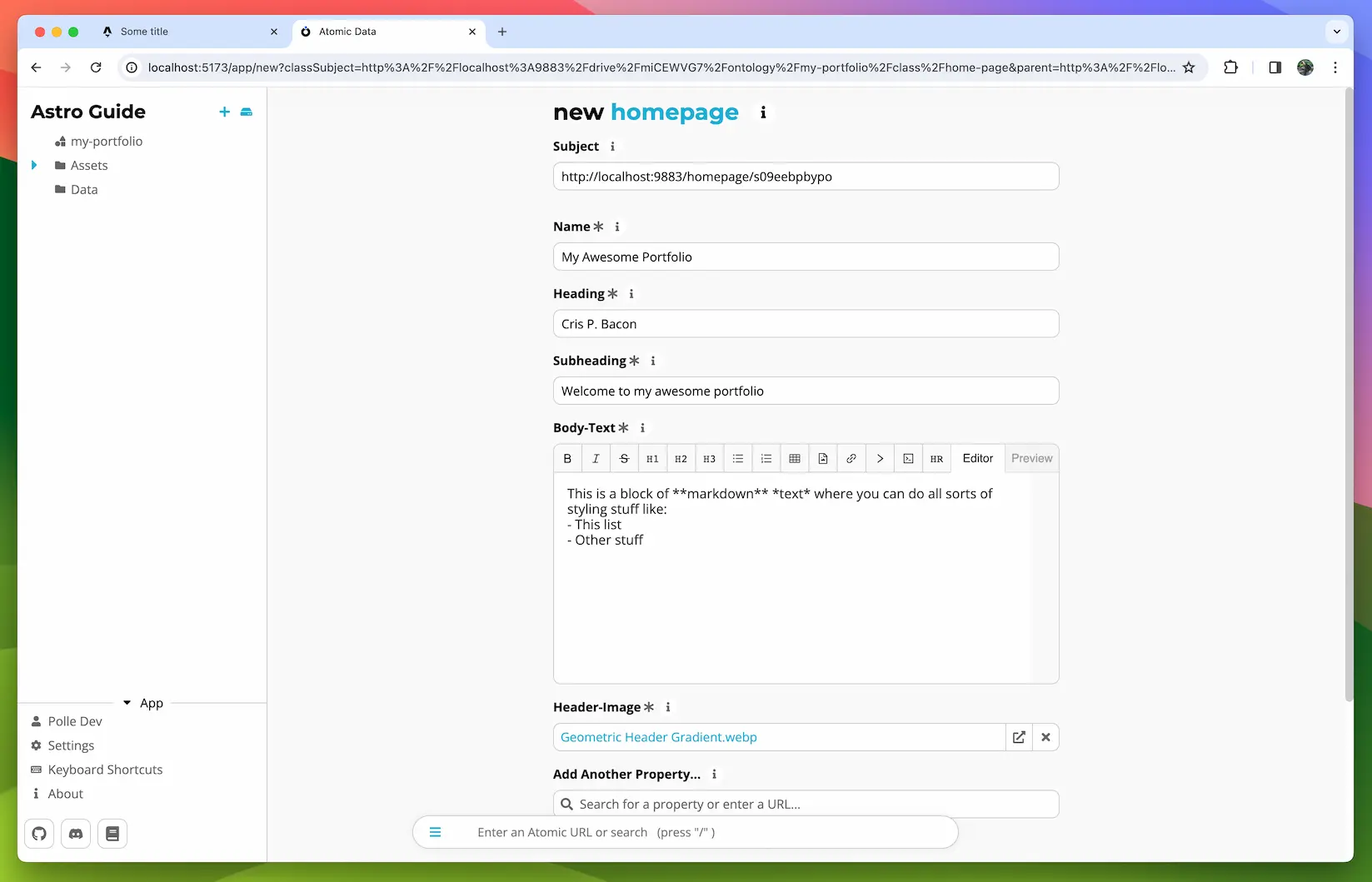

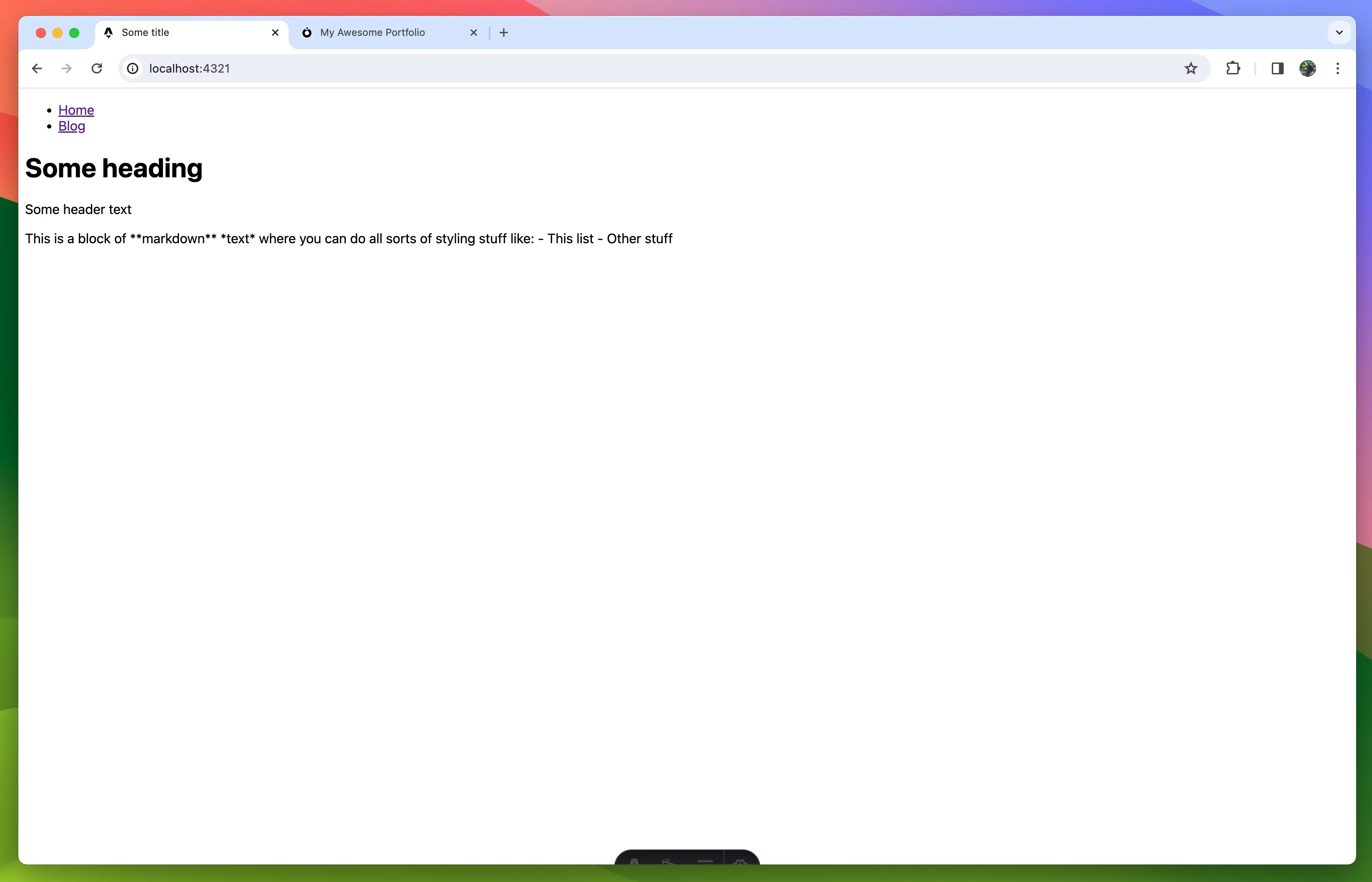

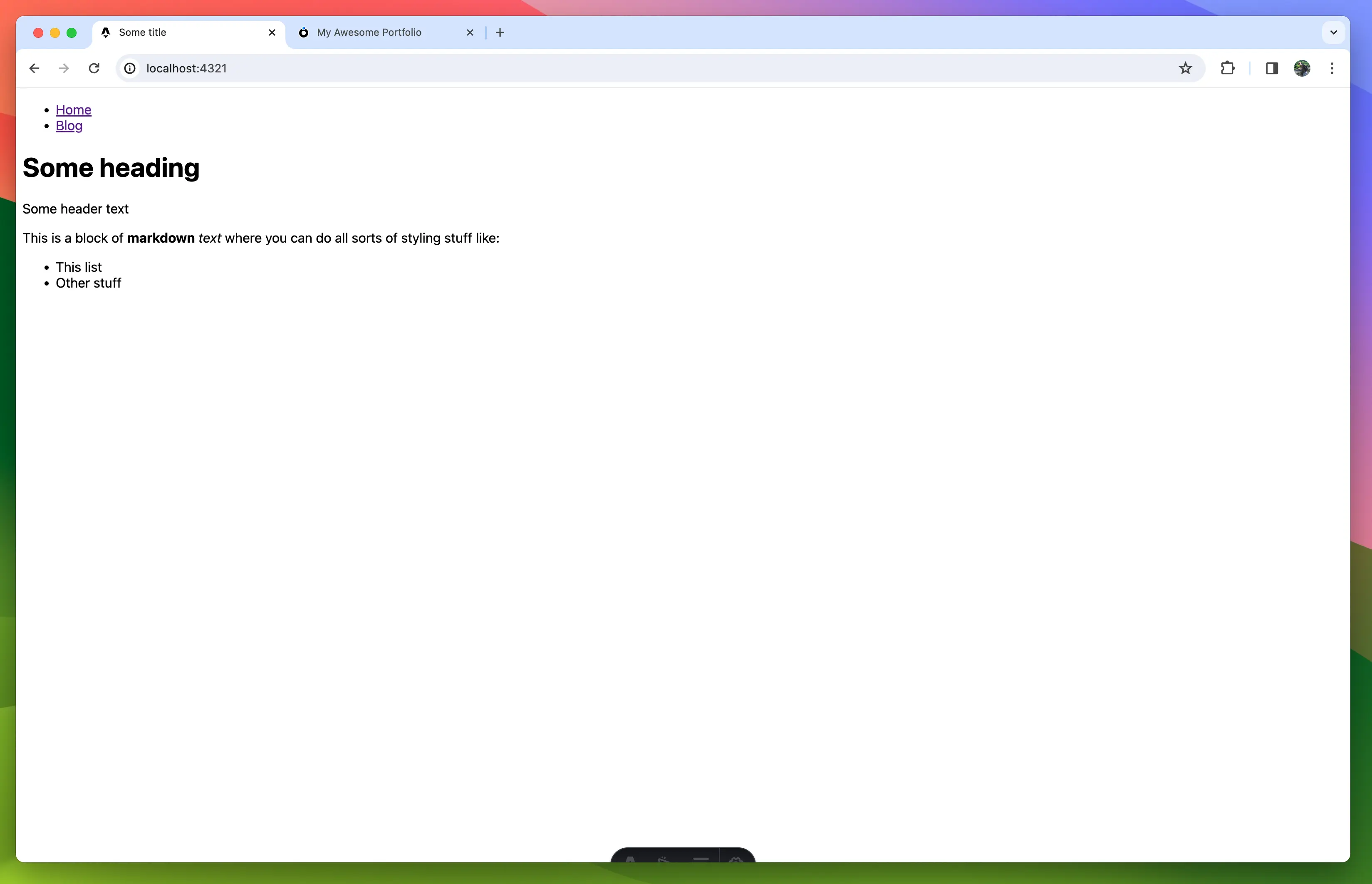

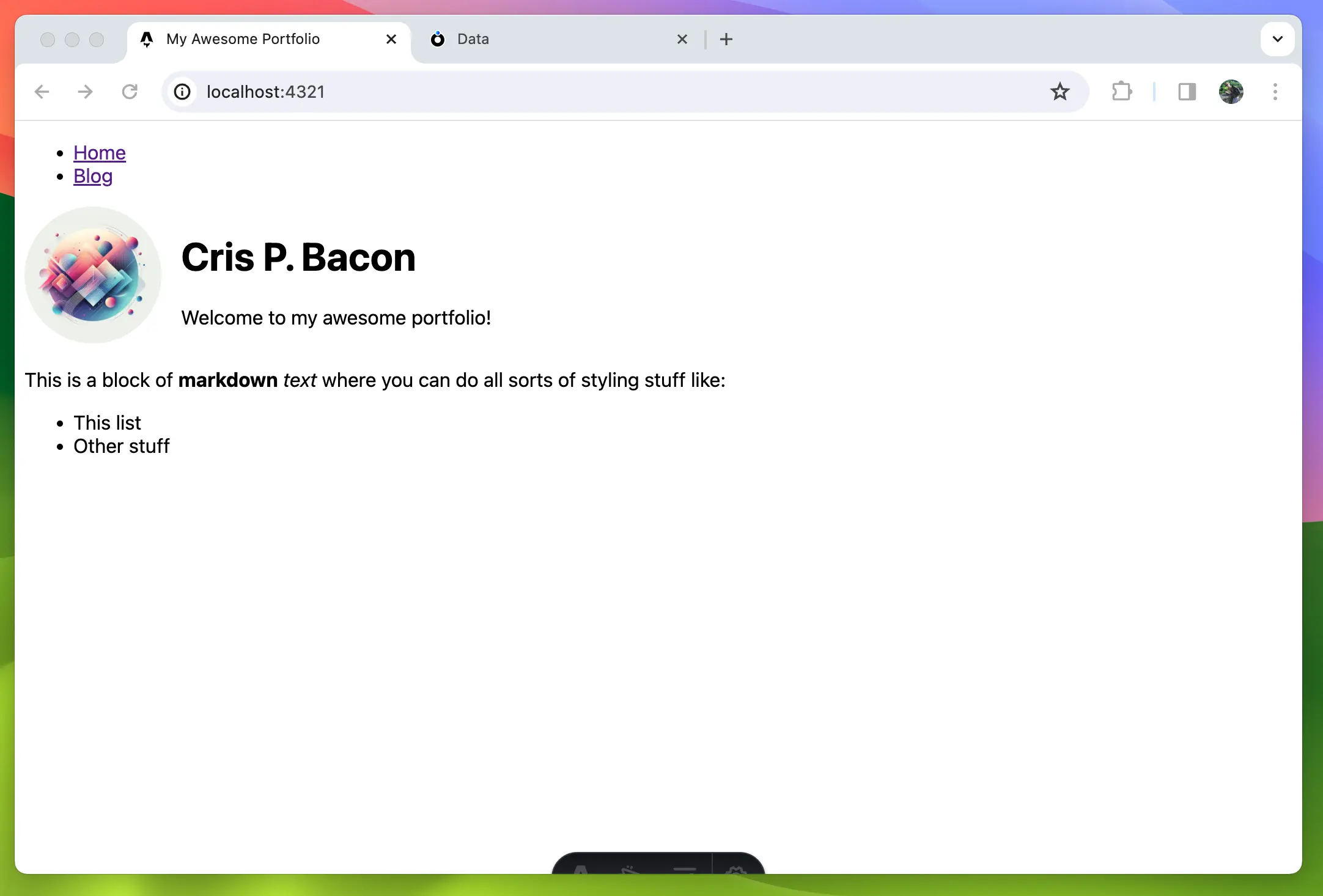

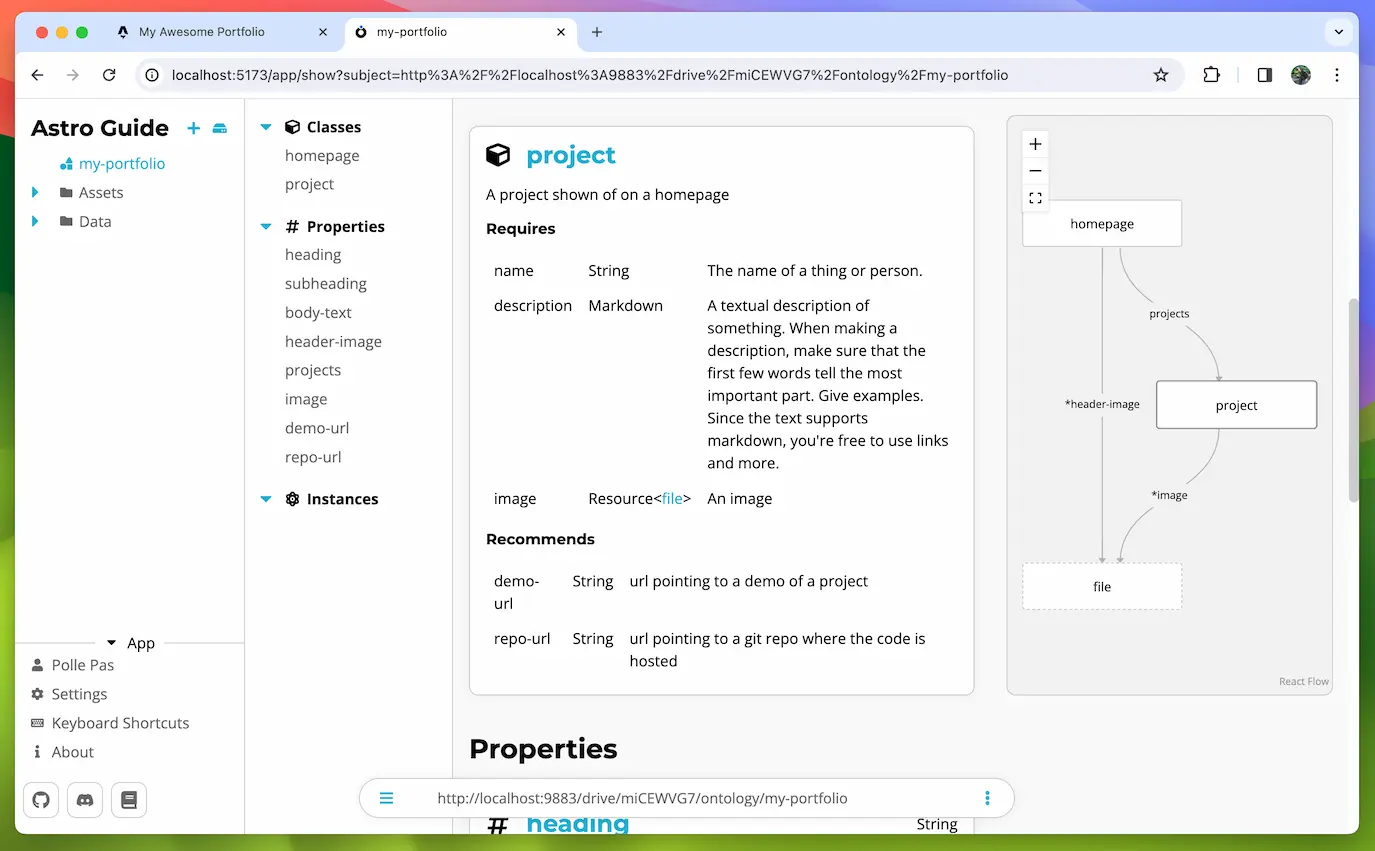

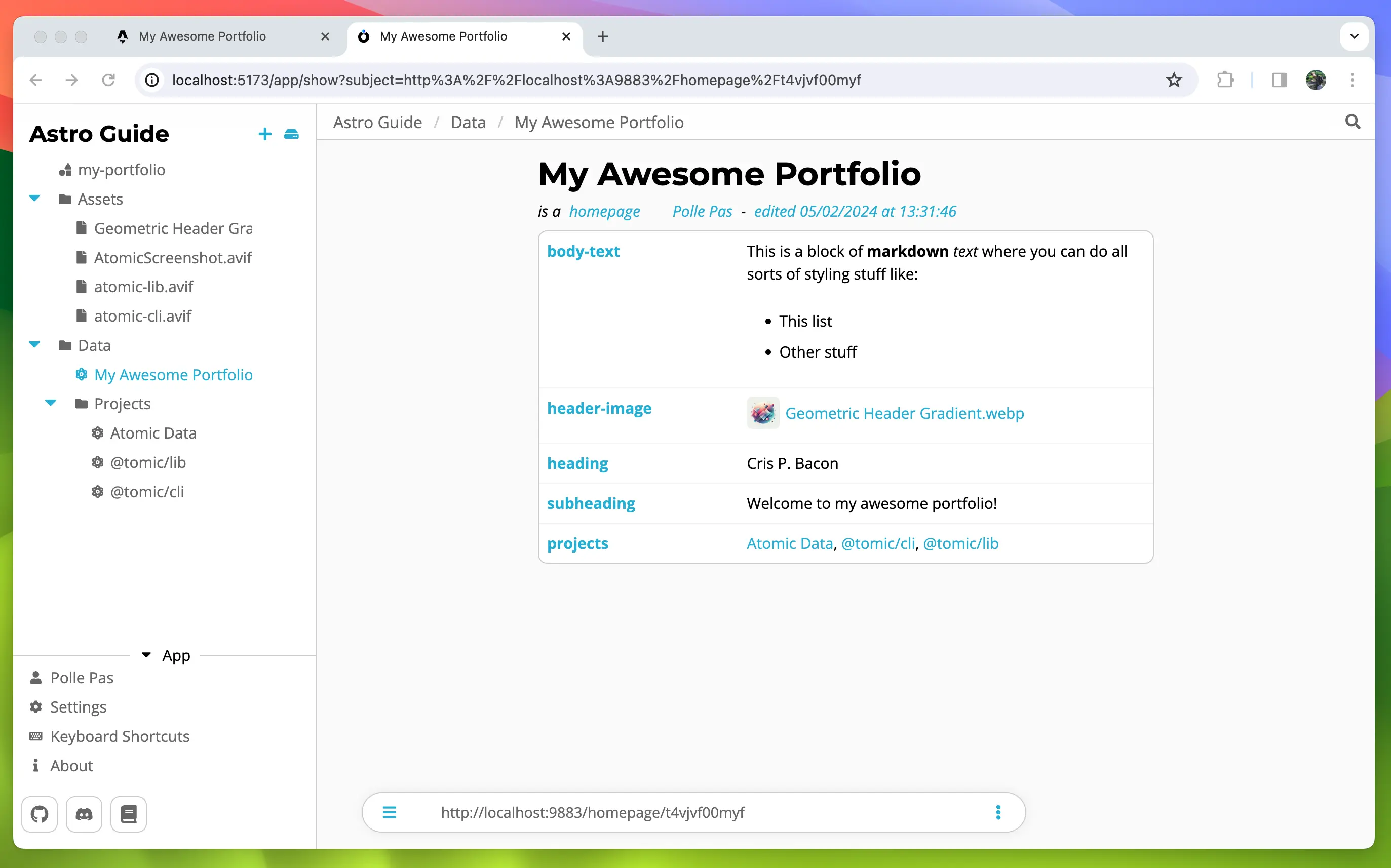

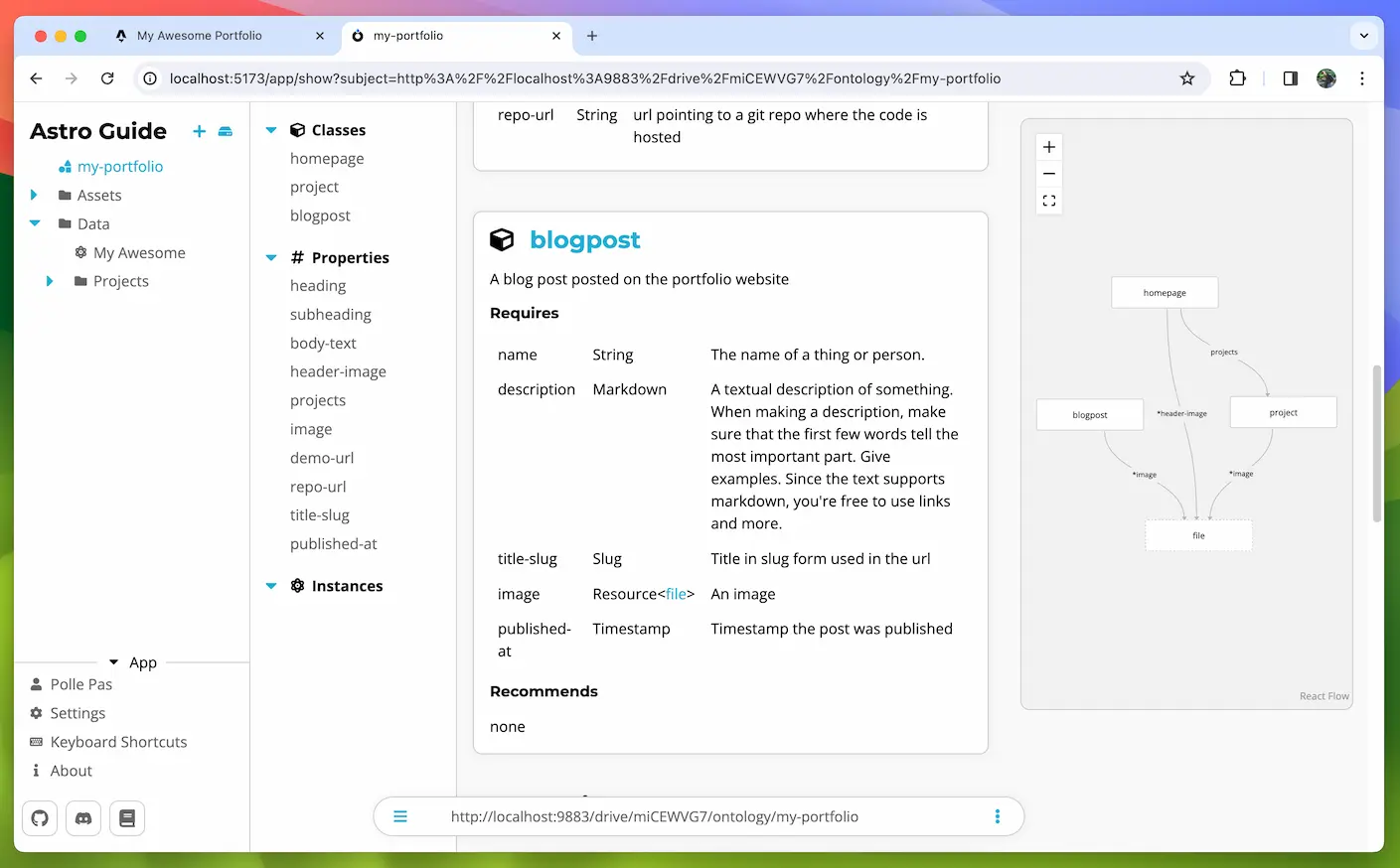

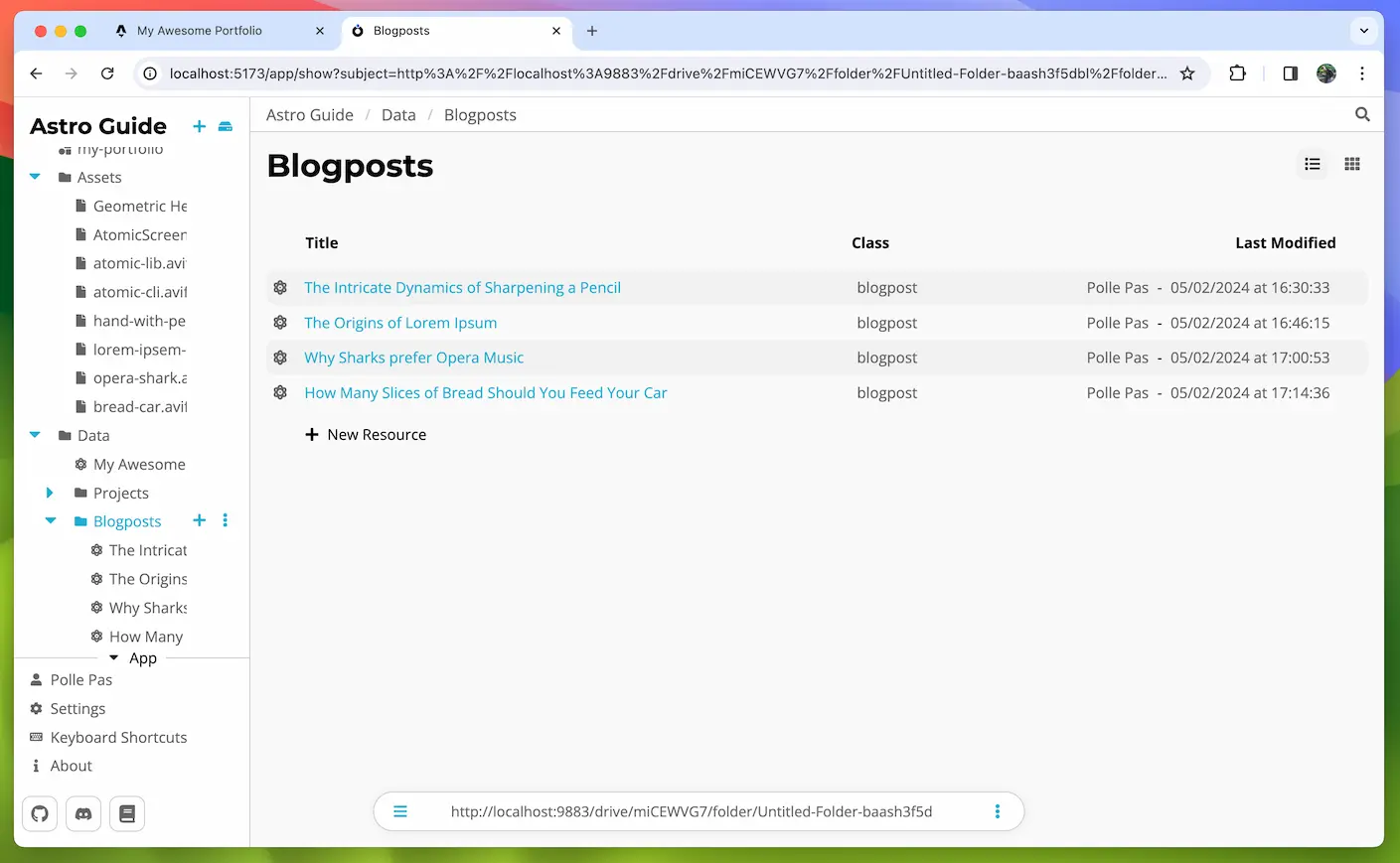

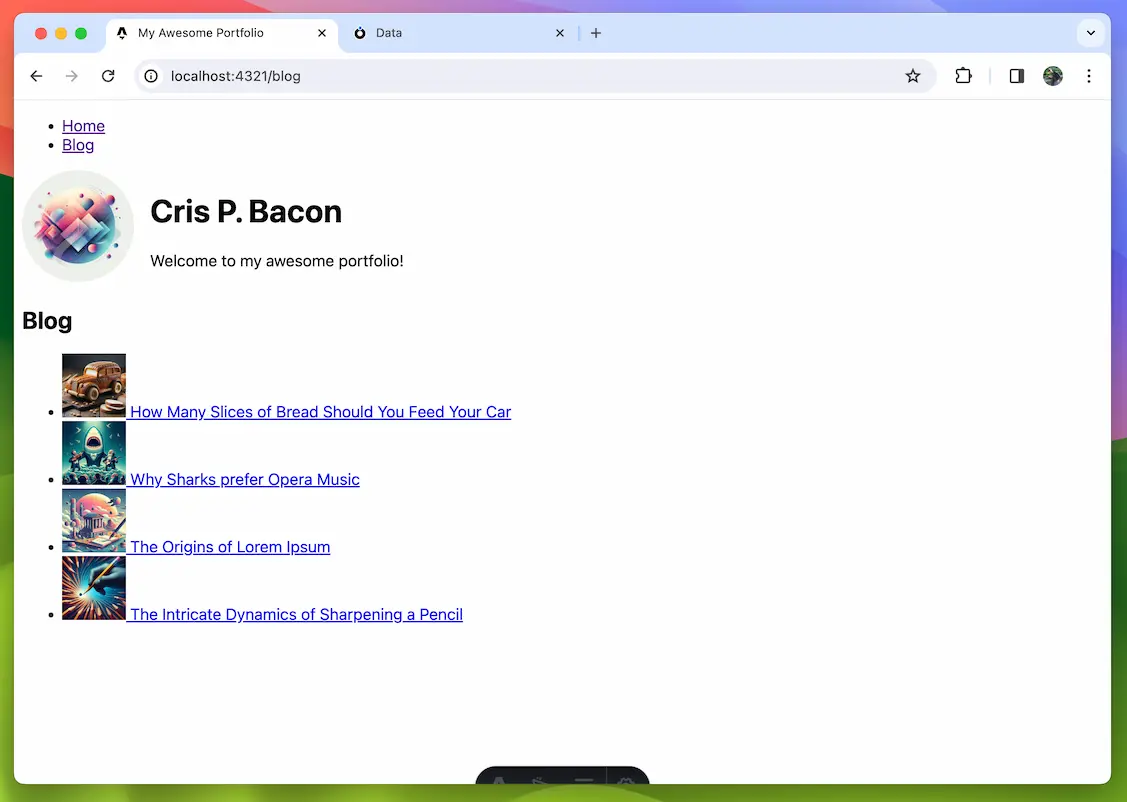

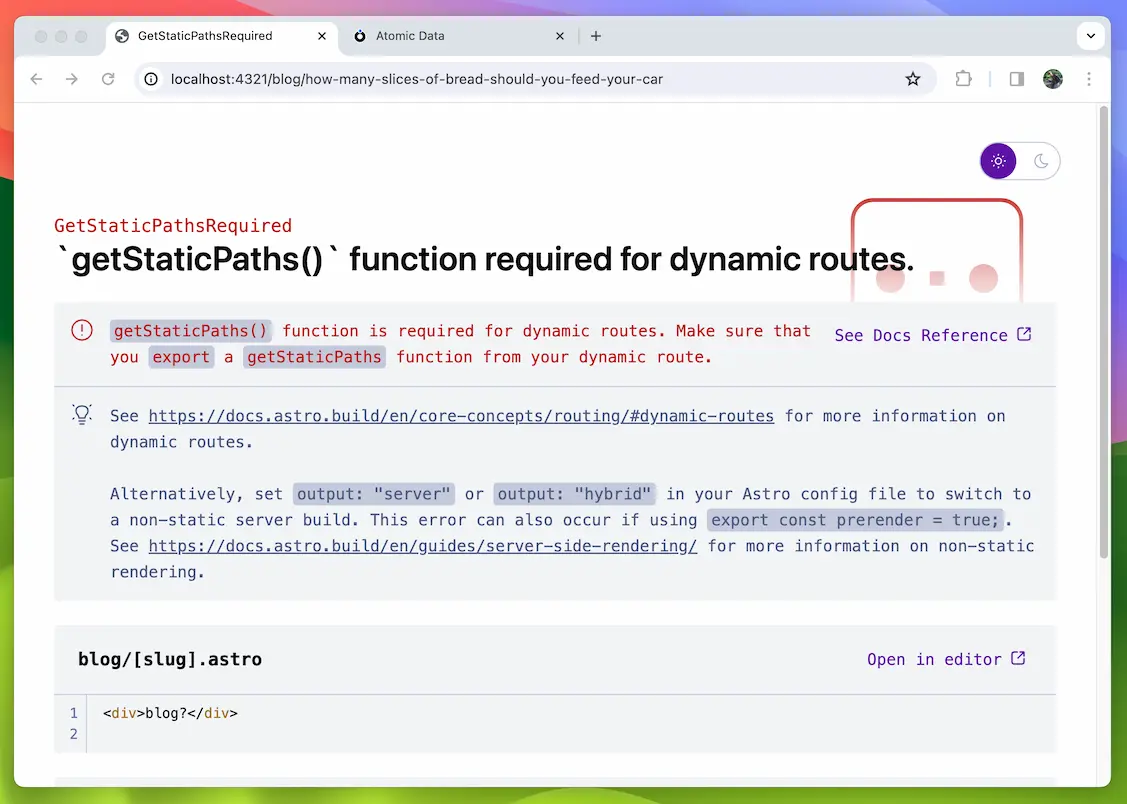

- Headless CMS tooling (2024). Use Atomic-Server to host and edit data that is being read by a front-end JAMSTACK type of tool, such as NextJS or SvelteKit.

- Atomizer (tbd). Import files and automatically turn these into Atomic Data.

- Atomic-server plugins (tbd). Let developers design new features without having to make PRs in Atomic-Server, and let users install apps without re-compiling (or even restarting) anything.

- Atomic-browser plugins (tbd). Create new views for Classes.

- 1.0 release (tbd). Mark the specification, the server (tracking issue) and the browser as stable. It is possible that the Spec will become 1.0 before any implementation is stable. Read the STATUS.md document for an up-to-date list of features that are already stable.

When (not) to use Atomic Data

When should you use Atomic Data

- Flexible schemas. When dealing with structured wikis or semantic data, various instances of things will have different attributes. Atomic Data allows any kind of property on any resource.

- Open data. Atomic Data is a bit harder to create than plain JSON, for example, but it is easier to re-use and understand. It’s use of URLs for properties makes data self-documenting.

- High interoperability requirements. When multiple groups of people have to use the same schema, Atomic Data provides easy ways to constrain and validate the data and ensure type safety.

- Connected / decentralized data. With Atomic Data, you use URLs to point to things on other computers. This makes it possible to connect datasets very explicitly, without creating copies. Very useful for decentralized social networks, for example.

- Auditability & Versioning. Using Atomic Commits, we can store all changes to data as transactions that can be replayed. This creates a complete audit log and history.

- JSON or RDF as Output. Atomic Data serializes to idiomatic, clean JSON as well as various RDF formats (Turtle / JSON-LD / n-triples / RDF/XML).

When not to use Atomic Data

- Internal use only. If you’re not sharing structured data, Atomic Data will probably only make things harder for you.

- Big Data. If you’re dealing with TeraBytes of data, you probably don’t want to use Atomic Data. The added cost of schema validation and the lack of distributed / large scale persistence tooling makes it not the right choice.

- Video / Audio / 3D. These should have unique, optimized binary representations and have very strict, static schemas. The advantages of atomic / linked data do little to improve this, unless it’s just for metadata.

AtomicServer and its features

AtomicServer is the reference implementation of the Atomic Data Core + Extended specification.

It was developed parallel to this specification, and it served as a testing ground for various ideas (some of which didn’t work, and some of which ended up in the spec).

AtomicServer is a real-time headless CMS, graph database server for storing and sharing typed linked data. It’s free, open source (MIT license), and has a ton of features:

- 🚀 Fast (less than 1ms median response time on my laptop), powered by actix-web and sled

- 🪶 Lightweight (8MB download, no runtime dependencies)

- 💻 Runs everywhere (linux, windows, mac, arm)

- 🔧 Custom data models: create your own classes, properties and schemas using the built-in Ontology Editor. All data is verified and the models are sharable using Atomic Schema

- ⚙️ Restful API, with JSON-AD responses.

- 🔎 Full-text search with fuzzy search and various operators, often <3ms responses. Powered by tantivy.

- 🗄️ Tables, with strict schema validation, keyboard support, copy / paste support. Similar to Airtable.

- 📄 Documents, collaborative, rich text, similar to Google Docs / Notion.

- 💬 Group chat, performant and flexible message channels with attachments, search and replies.

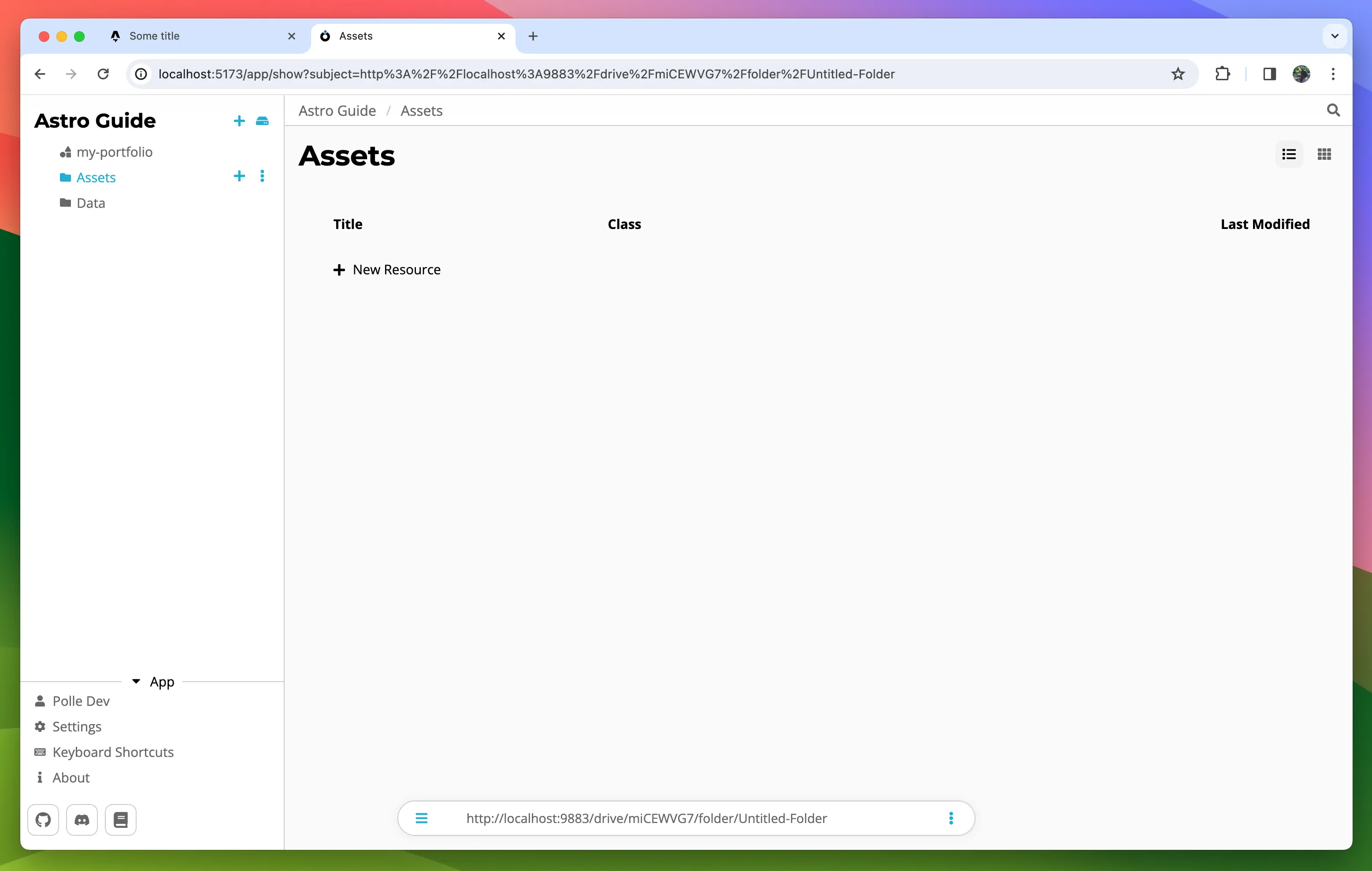

- 📂 File management: Upload, download and preview attachments.

- 💾 Event-sourced versioning / history powered by Atomic Commits

- 🔄 Real-time synchronization: instantly communicates state changes with a client. Build dynamic, collaborative apps using websockets (using a single one-liner in react or svelte).

- 🧰 Many serialization options: to JSON, JSON-AD, and various Linked Data / RDF formats (RDF/XML, N-Triples / Turtle / JSON-LD).

- 📖 Pagination, sorting and filtering queries using Atomic Collections.

- 🔐 Authorization (read / write permissions) and Hierarchical structures powered by Atomic Hierarchy

- 📲 Invite and sharing system with Atomic Invites

- 🌐 Embedded server with support for HTTP / HTTPS / HTTP2.0 (TLS) and Built-in LetsEncrypt handshake.

- 📚 Libraries: Javascript / Typescript, React, Svelte, Rust

Should you use AtomicServer?

When should you use AtomicServer

- You want a lightweight, fast, realtime and easy to use headless CMS with live updates, editors, modelling capabilities and an intuitive API

- You want realtime updates and collaboration functionality

- You want high performance: AtomicServer is incredibly fast and can handle thousands of requests per second.

- You want standalone app: no need for any external applications or dependencies (like a database / nginx).

- You want versioning or full-text search.

- You want to build a webapplication, and like working with using React or Svelte.

- You want to make (high-value) datasets as easily accessible as possible

- You want to specify and share a common vocabulary / ontology / schema for some specific domain or dataset. Example classes here.

- You want to use and share linked data, but don’t want to deal with most of the complexities of RDF, SPARQL, Triple Stores, Named Graphs and Blank Nodes.

- You are interested in re-decentralizing the web or want want to work with tech that improves data ownership and interoperability.

When not to use AtomicServer

- High-throughput numerical data / numerical analysis. AtomicServer does not have aggregate queries.

- If you need high stability, look further (for now). This is beta sofware and can change.

- You’re dealing with very sensitive / private data. The built-in authorization mechanisms are relatively new and not rigorously tested. The database itself is not encrypted.

- Complex query requirements. We have queries with filters and features for path traversal, but it may fall short. Check out NEO4j, Apache Jena or maybe TerminusDB.

Up next

Next, we’ll get to run AtomicServer!

Setup / installation

You can run AtomicServer in different ways:

- Using docker (probably the quickest):

docker run -p 80:80 -p 443:443 -v atomic-storage:/atomic-storage joepmeneer/atomic-server - From a published binary

- Using Cargo from crates.io:

cargo install atomic-server - Manually from source

If you want to run AtomicServer locally as a developer / contributor, check out the Contributors guide.

1. Run using docker

- Run:

docker run -p 80:80 -p 443:443 -v atomic-storage:/atomic-storage joepmeneer/atomic-serverThedockerfileis located in the project root, above thisserverfolder. - See dockerhub for a list of all the available tags (e.g. the

developtag for the very latest version) - If you want to make changes (e.g. to the port), make sure to pass the relevant CLI options (e.g.

--port 9883). - If you want to update, run

docker pull joepmeneer/atomic-serverand docker should fetch the latest version. - By default, docker downloads the

latesttag. You can find other tags here.

2. Run pre-compiled binary

Get the binaries from the releases page and copy them to your bin folder.

3. Install using cargo

# Install from source using cargo, and add it to your path

# If things go wrong, check out `Troubleshooting compiling from source:` below

cargo install atomic-server --locked

# Check the available options and commands

atomic-server --help

# Run it!

atomic-server

4. Compile from source

# make sure pnpm is installed and available in path! https://pnpm.io/

pnpm --version

git clone git@github.com:atomicdata-dev/atomic-server.git

cd atomic-server/server

cargo run

If things go wrong while compiling from source:

# If cc-linker, pkg-config or libssl-dev is not installed, make sure to install them

sudo apt-get install -y build-essential pkg-config libssl-dev --fix-missing

Initial setup and configuration

- You can configure the server by passing arguments (see

atomic-server --help), or by setting ENV variables. - The server loads the

.envfrom the current path by default. Create a.envfile from the default template in your current directory withatomic-server generate-dotenv - After running the server, check the logs and take note of the

Agent SubjectandPrivate key. You should use these in theatomic-cliand atomic-data-browser clients for authorization. - A directory is made:

~/.config/atomic, which stores your newly created Agent keys, the HTTPS certificates other configuration. Depending on your OS, the actual data is stored in different locations. See use theshow-configcommand to find out where, if you need the files. - Visit

http://localhost:9883/setupto register your first (admin) user. You can use an existing Agent, or create a new one. Note that if you create alocalhostagent, it cannot be used on the web (since, well, it’s local). More info and steps in getting started with the GUI.

Running using a tunneling service (easy mode)

If you want to make your -server available on the web, but don’t want (or cannot) deal with setting up port-forwarding and DNS, you can use a tunneling service. It’s the easiest way to get your server to run on the web, yet still have full control over your server.

- Create an account on some tunneling service, such as tunnelto.dev (which we will use here). Make sure to reserve a subdomain, you want it to remain stable.

tunnelto --port 9883 --subdomain joepio --key YOUR_API_KEYatomic-server --domain joepio.tunnelto.dev --custom-server-url 'https://joepio.tunnelto.dev' --initialize

HTTPS Setup on a VPS (static IP required)

You’ll probably want to make your Atomic Data available through HTTPS on some server. You can use the embedded HTTPS / TLS setup powered by LetsEncrypt, acme_lib and rustls.

You can do this by passing these flags:

Run the server: atomic-server --https --email some@example.com --domain example.com.

You can also set these things using a .env or by setting them some other way.

Make sure the server is accessible at ATOMIC_DOMAIN at port 80, because Let’s Encrypt will send an HTTP request to this server’s /.well-known directory to check the keys.

The default Ports are 9883 for HTTP, and 9884 for HTTPS.

If you’re running the server publicly, set these to 80 and 433: atomic-server --https --port 80 --port-https 433.

It will now initialize the certificate.

Read the logs, watch for errors.

HTTPS certificates are automatically renewed when the server is restarted, and the certs are 4 weeks or older.

They are stored in your .config/atomic/ dir.

HTTPS Setup using external HTTPS proxy

Atomic-server has built-in HTTPS support using letsencrypt, but there are usecases for using external TLS source (e.g. Traeffik / Nginx / Ingress).

To do this, users need to set these ENVS:

ATOMIC_DOMAIN=example.com

# We'll use this regular HTTP port, not the HTTPS one

ATOMIC_PORT=80

# Disable built-in letsencrypt

ATOMIC_HTTPS=false

# Since Atomic-server is no longer aware of the existence of the external HTTPS service, we need to set the full URL here:

ATOMIC_SERVER_URL=https://example.com

Using systemd to run Atomic-Server as a service

In Linux operating systems, you can use systemd to manage running processes.

You can configure it to restart automatically, and collect logs with journalctl.

Create a service:

nano /etc/systemd/system/atomic.service

Add this to its contents, make changes if needed:

[Unit]

Description=Atomic-Server

#After=network.targetdd

StartLimitIntervalSec=0[Service]

[Service]

Type=simple

Restart=always

RestartSec=1

User=root

ExecStart=/root/atomic-server

WorkingDirectory=/root/

EnvironmentFil=/root/.env

[Install]

WantedBy=multi-user.target

# start / status / restart commands:

systemctl start atomic

systemctl status atomic

systemctl restart atomic

# show recent logs, follow them on screen

journalctl -u atomic.service --since "1 hour ago" -f

AtomicServer CLI options / ENV vars

(run atomic-server --help to see the latest options)

Create, share and model Atomic Data with this graph database server. Run atomic-server without any arguments to start the server. Use --help to learn about the options.

Usage: atomic-server [OPTIONS] [COMMAND]

Commands:

export

Create and save a JSON-AD backup of the store

import

Import a JSON-AD file or stream to the store. By default creates Commits for all changes, maintaining version history. Use --force to allow importing other types of files

generate-dotenv

Creates a `.env` file in your current directory that shows various options that you can set

show-config

Returns the currently selected options, based on the passed flags and parsed environment variables

reset

Danger! Removes all data from the store

help

Print this message or the help of the given subcommand(s)

Options:

--initialize

Recreates the initial Invite for creating a new Root User, prints it to console. Also re-runs various populate commands, and re-builds the index

[env: ATOMIC_INITIALIZE=]

--rebuild-indexes

Re-builds the indexes. Parses all the resources. Do this when updating requires it, or if you have issues with Collections / Queries / Search

[env: ATOMIC_REBUILD_INDEX=]

--development

Use staging environments for services like LetsEncrypt

[env: ATOMIC_DEVELOPMENT=]

--domain <DOMAIN>

The origin domain where the app is hosted, without the port and schema values

[env: ATOMIC_DOMAIN=]

[default: localhost]

-p, --port <PORT>

The port where the HTTP app is available. Set to 80 if you want this to be available on the network

[env: ATOMIC_PORT=]

[default: 9883]

--port-https <PORT_HTTPS>

The port where the HTTPS app is available. Set to 443 if you want this to be available on the network

[env: ATOMIC_PORT_HTTPS=]

[default: 9884]

--ip <IP>

The IP address of the server. Set to :: if you want this to be available to other devices on your network

[env: ATOMIC_IP=]

[default: ::]

--https

Use HTTPS instead of HTTP. Will get certificates from LetsEncrypt fully automated

[env: ATOMIC_HTTPS=]

--https-dns

Initializes DNS-01 challenge for LetsEncrypt. Use this if you want to use subdomains

[env: ATOMIC_HTTPS_DNS=]

--email <EMAIL>

The contact mail address for Let's Encrypt HTTPS setup

[env: ATOMIC_EMAIL=]

--script <SCRIPT>

Custom JS script to include in the body of the HTML template

[env: ATOMIC_SCRIPT=]

[default: ]

--config-dir <CONFIG_DIR>

Path for atomic data config directory. Defaults to "~/.config/atomic/""

[env: ATOMIC_CONFIG_DIR=]

--data-dir <DATA_DIR>

Path for atomic data store folder. Contains your Store, uploaded files and more. Default value depends on your OS

[env: ATOMIC_DATA_DIR=]

--public-mode

CAUTION: Skip authentication checks, making all data publicly readable. Improves performance

[env: ATOMIC_PUBLIC_MODE=]

--server-url <SERVER_URL>

The full URL of the server. It should resolve to the home page. Set this if you use an external server or tunnel, instead of directly exposing atomic-server. If you leave this out, it will be generated from `domain`, `port` and `http` / `https`

[env: ATOMIC_SERVER_URL=]

--log-level <LOG_LEVEL>

How much logs you want. Also influences what is sent to your trace service, if you've set one (e.g. OpenTelemetry)

[env: RUST_LOG=trace]

[default: info]

[possible values: warn, info, debug, trace]

--trace <TRACE>

How you want to trace what's going on with the server. Useful for monitoring performance and errors in production. Combine with `log_level` to get more or less data (`trace` is the most verbose)

[env: ATOMIC_TRACING=opentelemetry]

[default: stdout]

Possible values:

- stdout:

Log to STDOUT in your terminal

- chrome:

Create a file in the current directory with tracing data, that can be opened with the chrome://tracing/ URL

- opentelemetry:

Log to a local OpenTelemetry service (e.g. Jaeger), using default ports

--slow-mode

Introduces random delays in the server, to simulate a slow connection. Useful for testing

[env: ATOMIC_SLOW_MODE=]

-h, --help

Print help information (use `-h` for a summary)

-V, --version

Print version information

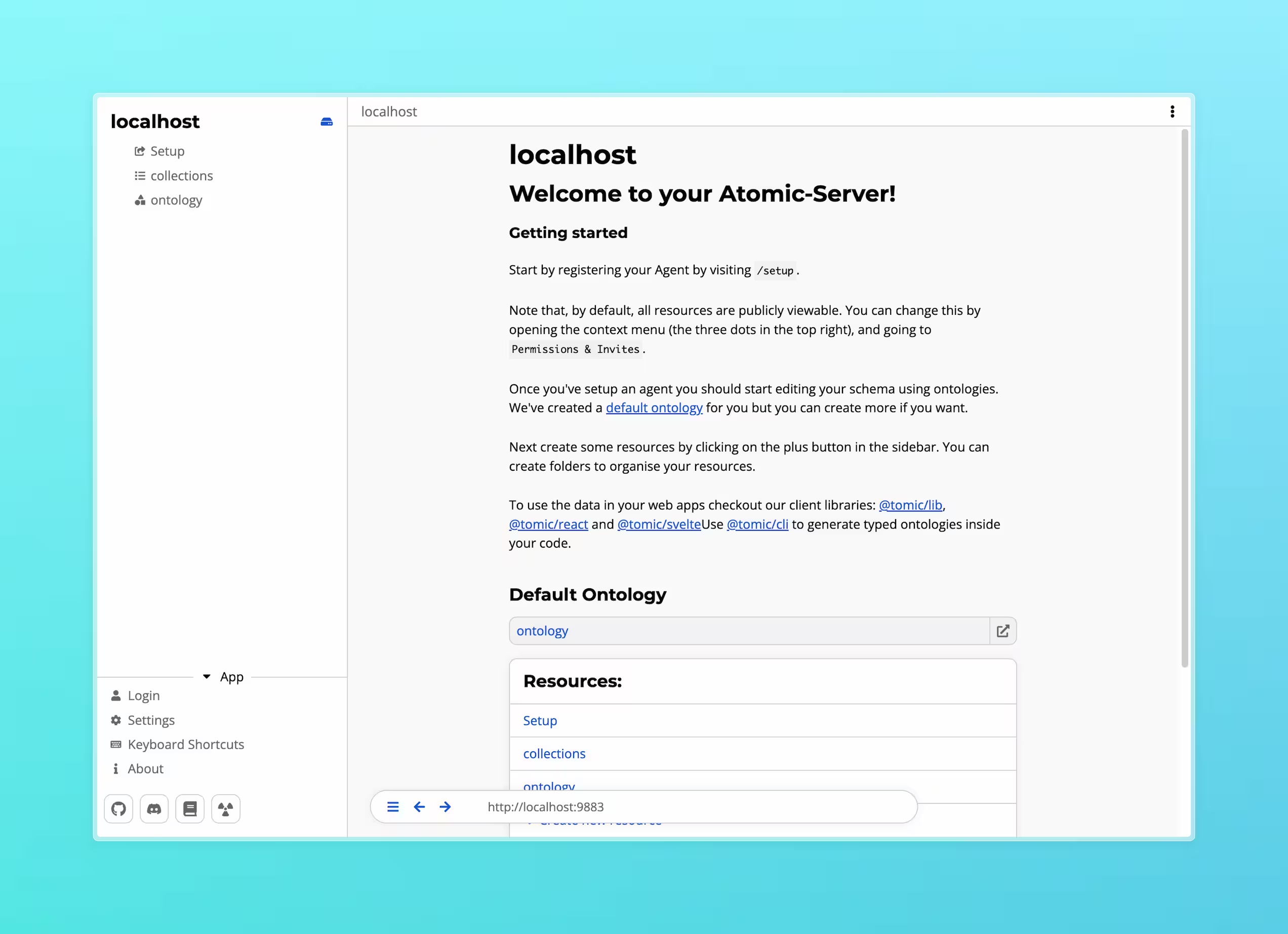

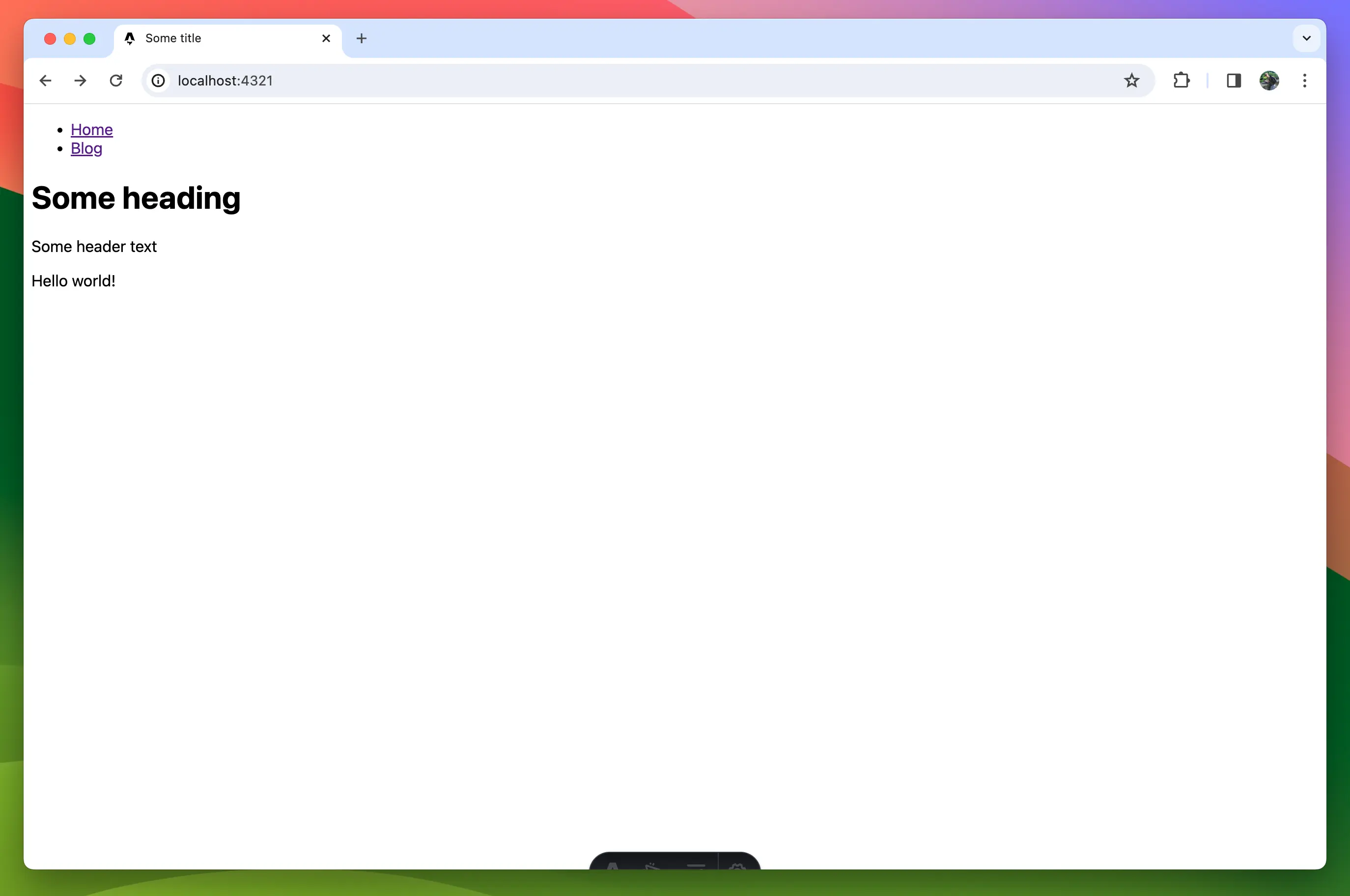

Using the AtomicServer GUI

After running the server, open it in your browser.

By default, that’s at http://localhost:9883.

Fun fact:

⚛is HTML entity code for the Atom icon: ⚛

The first screen should show you your main Drive. You can think of this as the root of the server. It is the resource hosted at the root URL, effectively being the home page of your server.

In the sidebar you will see a list of resources in the current drive. At the start these will be:

- The setup invite that’s used to configure the root agent.

- A resource named

collections. This is a group of collections that shows collections for all classes in the server, essentially a list of all resources. - The default ontology. Ontologies are used to define new classes and properties and show to relation between them.

Creating an agent

To create data in AtomicServer you’ll need an agent.

An agent is like a user account, it signs the changes (commits) you make to data so that others can verify that you made them.

Agents are identified by a DID derived from their public key (did:ad:{publicKey}), so they can be used on any AtomicServer without needing to be registered first.

To get started, you can use the demo invite on atomicdata.dev, or the /setup invite on your own server.

Click the “Accept as new user” button. The app will generate a key pair and your Agent will be created. Navigate to the User Settings page to find your agent secret. This secret is what you use to login, so keep it somewhere safe, like in a password manager. If you lose it you won’t be able to recover your account.

Setting up the root Agent

Next, we’ll set up the root Agent that has write access to the Drive.

If you’ve already accepted the /setup invite, you can skip this step.

Head to the setup page by selecting it in the sidebar.

You’ll see a button that either says Accept as <Your agent> or Accept as new user.

If it says “as new user”, click on login, paste your secret in the input field and return to the invite page.

After clicking the accept button you’ll be redirected to the home page and you will have write access to the Drive.

You can verify this by hovering over the description field, clicking the edit icon, and making a few changes.

You can also press the menu button (three dots, top left) and press Data view to see your agent after the write field.

Note that you can now edit every field.

The /setup invite can only be used once and will therefore not work anymore.

If you want to re-enable the invite to change the root agent you can start AtomicServer with the --initialize flag.

Creating your first Atomic Data

Now that everything is up and running you can start creating some resources. To create a new resource, click the + button in the sidebar. You will be presented with a list of resource types to choose from like Tables, Folders, Documents etc. You can also create your own types by using ontologies.

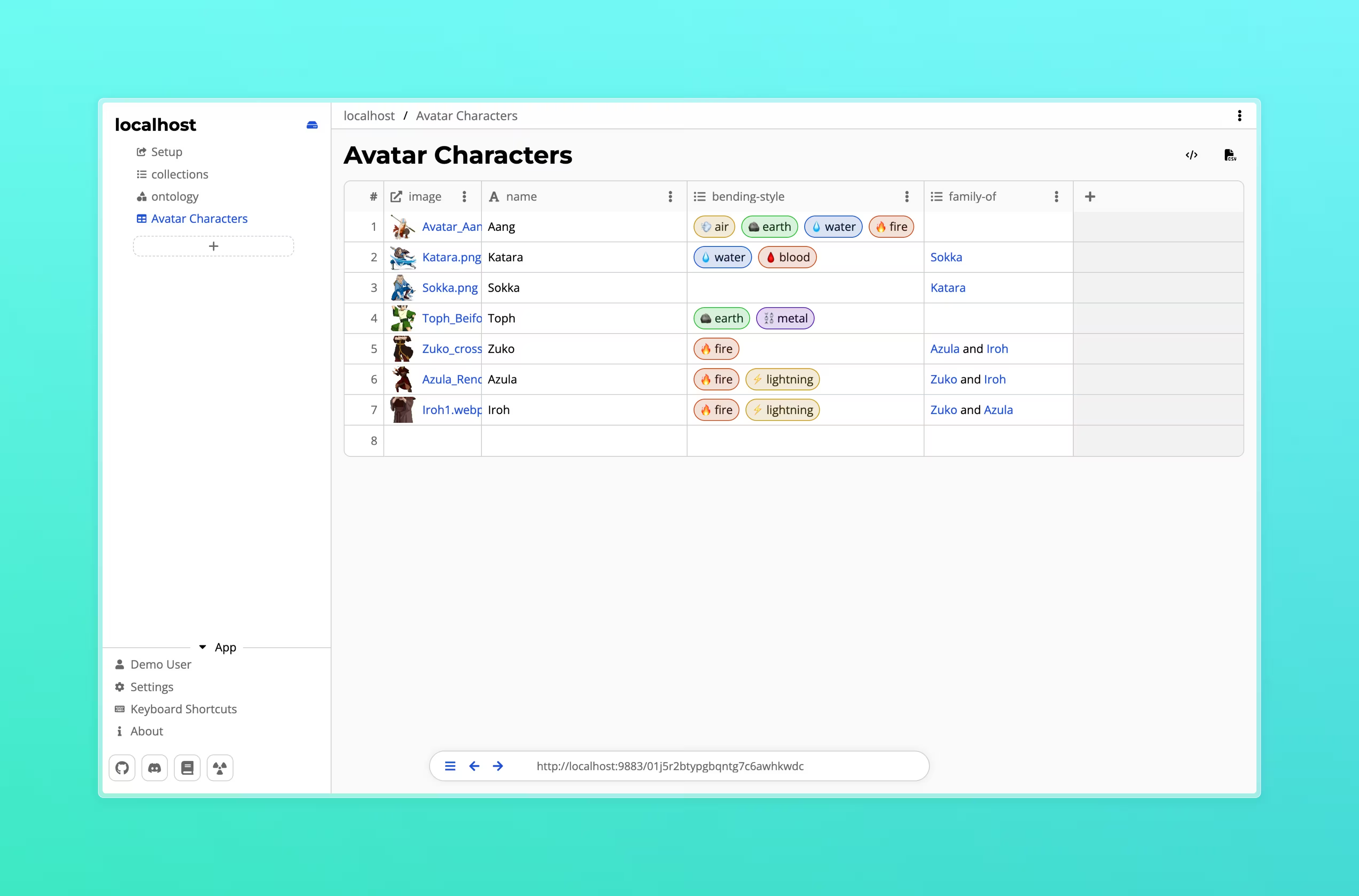

Tables

Tables are a way to create and group large amounts of structured data.

Tables consist of rows of resources that share the same parent and class. The properties of that class are represented as columns in the table. This means that each column is type-safe, a number column can not contain text data for example.

Creating a table

To create a table, click the “+” button in the sidebar or a folder and select “Table”.

A dialog will appear prompting you to enter a name.

This name will be used as the title of the table as well as the name for the underlying class of the rows.

This new class will already have a name property. Using the name property as titles on your resources is a best practice as it helps with compatibility between other tools and makes your resources findable by AtomicServer’s search functionality.

If you do not want to use the name property, you can remove it by clicking on the three dots in the column header and selecting “Remove”.

While creating a new table you can also choose to use an existing class by selecting “Use existing class” in the dialog and selecting the desired class from the dropdown.

Classes created by tables are automatically added to the default ontology of the drive. Same goes for the columns of the table. If you chose to use an existing class, any columns created will be added to the ontology containing that class.

Features

- Rearange columns: You can drag and drop columns to rearrange them.

- Resize columns: You can resize columns by dragging the edges of the column header.

- Sort rows: Click on a column header to sort the rows by that column.

- Fast keyboard navigation: Use the arrow keys to navigate the table with hotkeys similar to Excel.

- Copy & paste multiple cells: You can copy and paste multiple cells by selecting them and using

Ctrl/Cmd + CandCtrl/Cmd + V. Pasting also works across different tables and even different applications that support HTML Table data (Most spreadsheet applications). - Export data to CSV: You can export the data of a table to a CSV file by clicking the “Export” button in the top right.

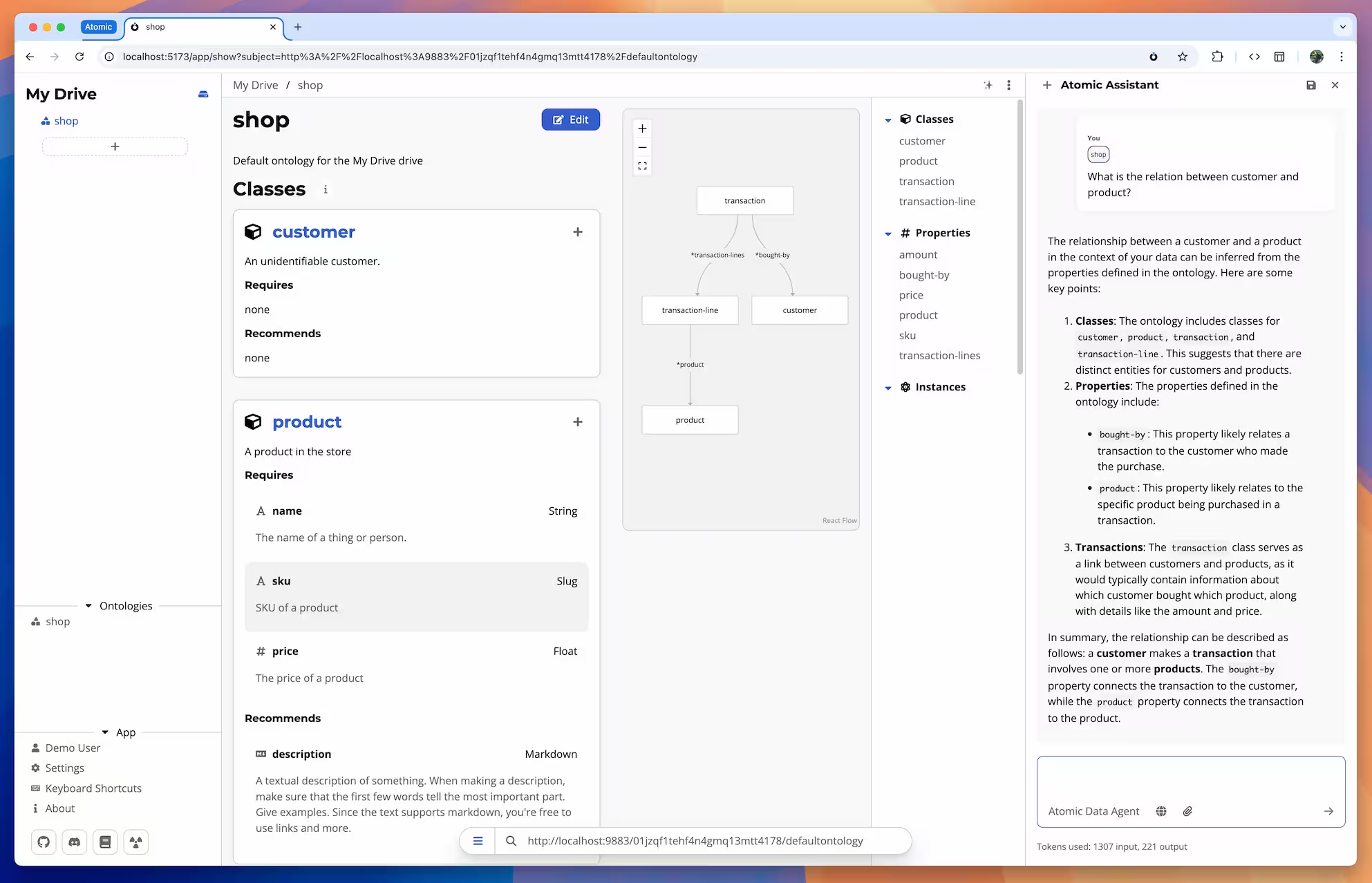

AI Features in AtomicServer

AtomicServer offers powerful AI capabilities to help you automate tasks, generate content, and interact with your data in new ways. It is also just a great general purpose AI client. And if you want nothing to do with AI, you can disable it completely in the settings.

AtomicServer integrates with large language models (LLMs) via two main providers:

- OpenRouter: A cloud-based API that gives access to a wide range of commercial and open-source models (e.g., GPT-4, Claude, Mixtral, etc.).

- Ollama: A self-hosted, local LLM server that runs models on your own hardware for privacy and offline use.

Configuring AI

Before you start using the AI features you will need to configure an AI provider. This is straightforward and can be done on the settings page.

OpenRouter

If you want to use OpenRouter, you will need an OpenRouter account with some credits. You can link it to AtomicServer by clicking the “Login with OpenRouter” button or pasting your API key in the text field.

Ollama

Download and install Ollama for your desired OS. Download some models from the terminal by entering.

ollama pull <model-name>

Next start the server:

ollama serve

Now in your AtomicServer go to the settings page, scroll down to the AI settings and under “AI Providers” click on Ollama.

There you can enter the URL of your Ollama server.

If you are running this server on the same machine as your browser, you can use http://localhost:11434/api as the URL.

Next you need to configure an agent to use the model. To do this go to an AI chat or open the AI sidebar and click on the agent in the chat input.

Click on the edit button and change the model to your desired Ollama model.

Using AI

AI Sidebar

The fastest way to use AI is with the AI sidebar. Open it by clicking on the ✨ button in the top right corner. Chats in the AI sidebar do not persist and not shared with other users. If you want to save your chat for later reference or to continue at a later point, you can save the chat as a resource by hitting the save button in the top right corner. Once saved the chat can be used like any other resource, you can share it with other users and reference it in other resources.

Creating an AI Chat resource.

You can also create AI chat resources without going through the AI sidebar first.

To create a full page AI chat that persists, create a new resource and click the ✨ ai-chat button.

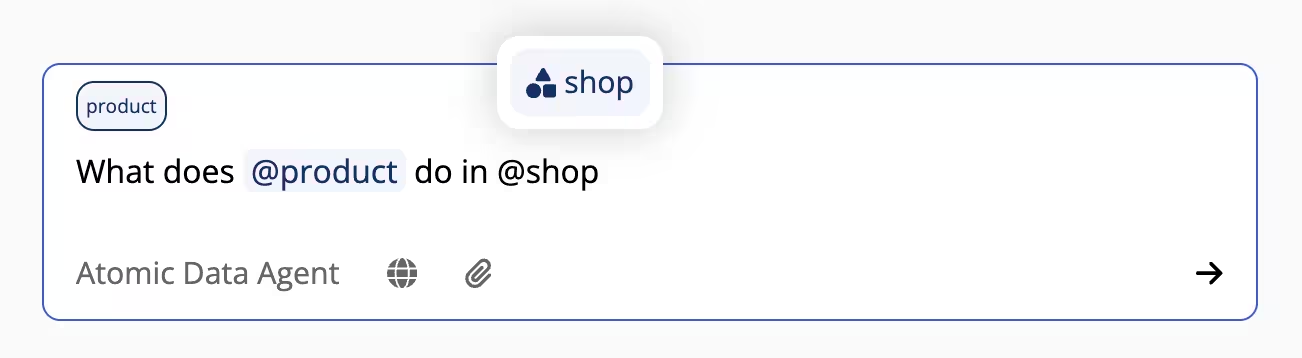

Referencing resources in the chat

You can reference any resource in the chat by typing the @ symbol and continue typing the name of the resource.

The resource data will then be added to the chat as context.

This way you can also reference resources from your MCP servers.

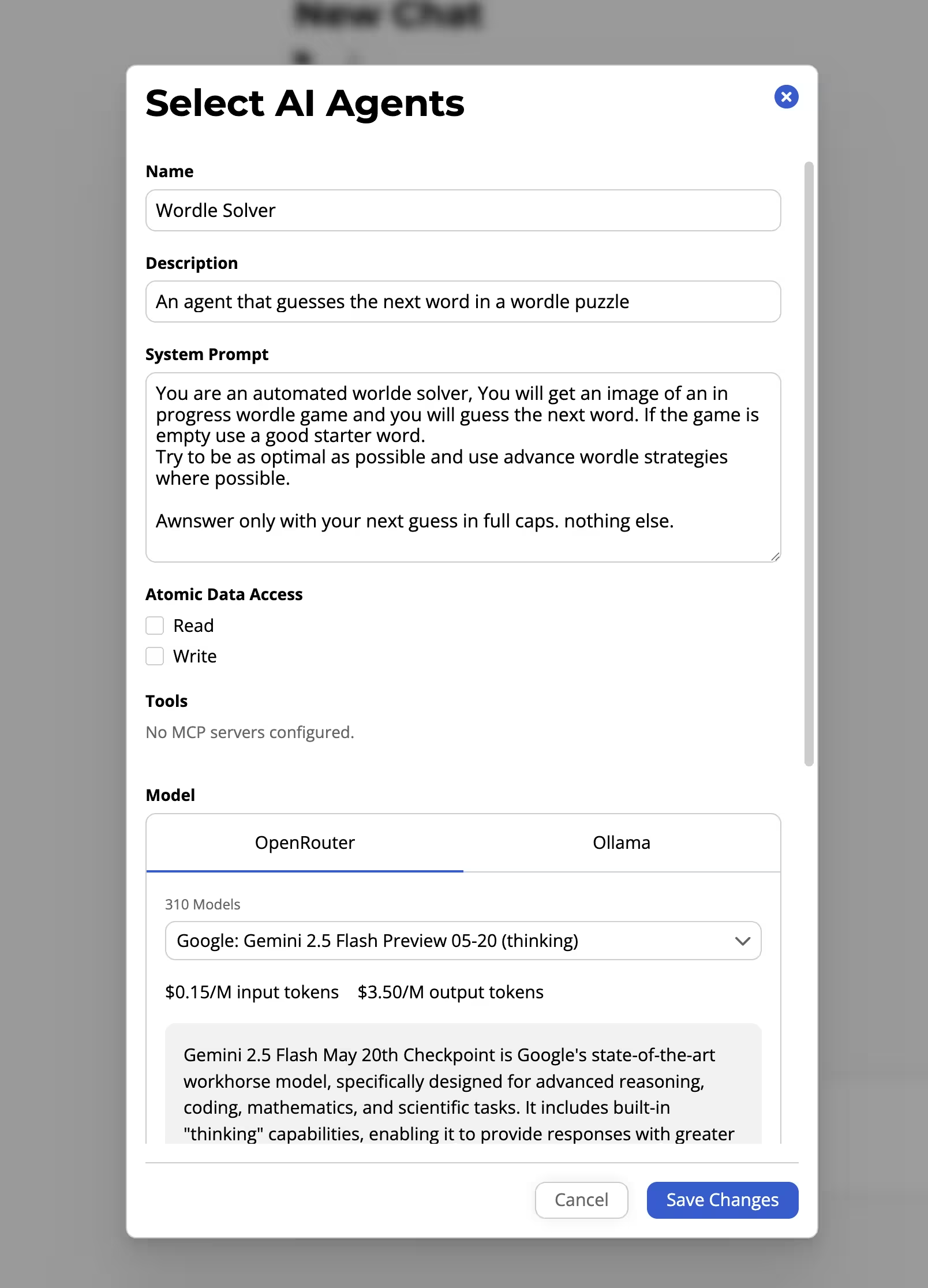

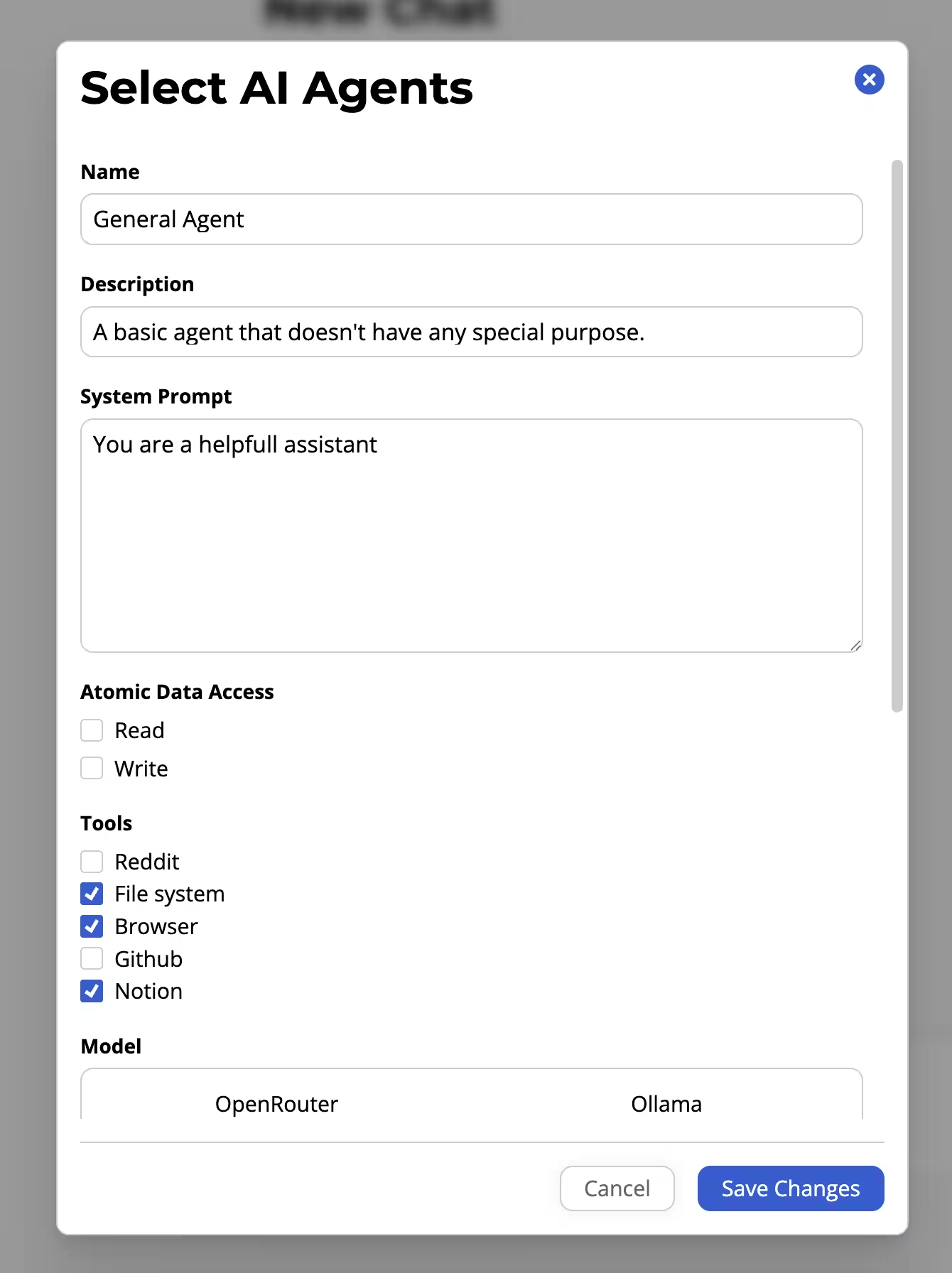

AI Agents

When you chat with an llm, the llm will act as a certain agent. By default this agent is the Atomic Assistant who helps you with AtomicServer stuff like answering questions about your data or searching and editing resources. There is also a general purpose agent that doesn’t have any instructions for if you just want to use it as a general purpose AI client. To switch between agents, click on the agent in the chat input. In the agent configuration dialog you can edit and create new agents, set an agent as the default or toggle the automatic agent selection. The default agents, Atomic Data Agent and General Agent, are also editable so if you want to change how they work or what model they use feel free to do so.

Creating Your Own Agents

You can also create your own agents that have their own system prompt, tool list and model.

Automatic Agent Selection

AtomicServer features automatic agent selection. To enable this, click on the agent in the chat input and check “Automatic agent selection”. AtomicServer will now automatically select the most relevant agent based on the context of the chat. It will look at the agent’s name, description and tools to determine the most relevant agent so make sure to give your agents a good name and description.

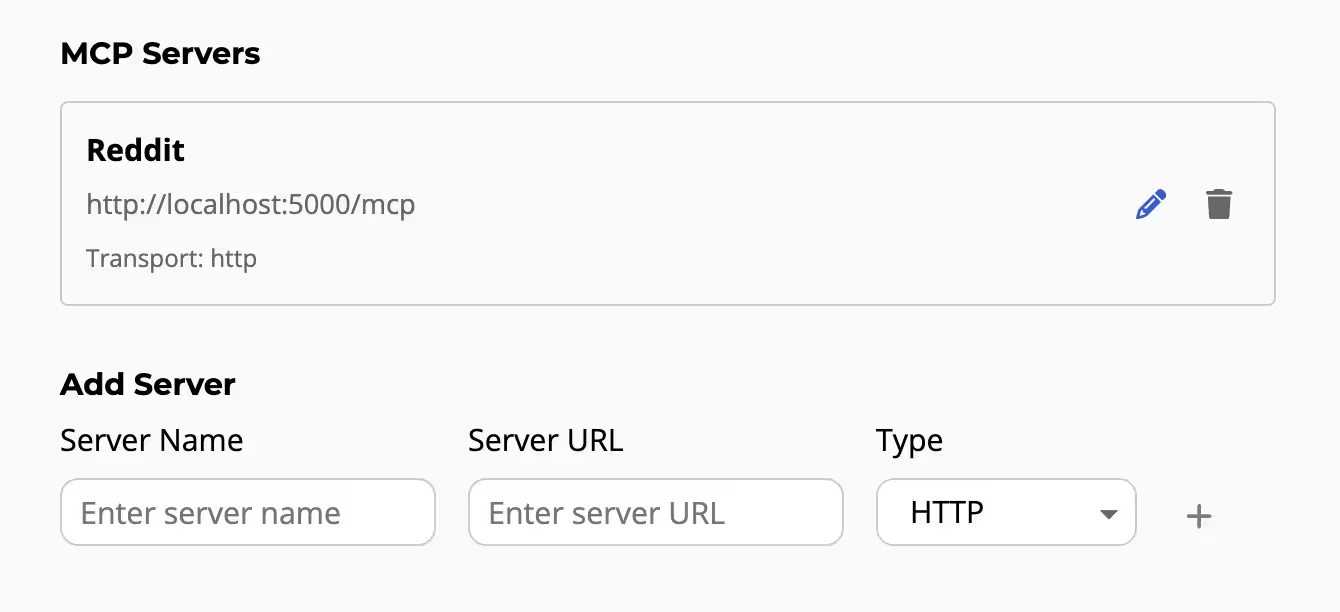

MCP tools and resources

You can add MCP servers to your AtomicServer client. To add an MCP server to your client, go to the settings page and under “MCP Servers” you can add new servers.

Next you need to enable the tools for the agent you want to use them with. This can be done by editing the agent and checking any mcp server you want it to have.

Your agent will now be able to call the tools of the MCP server.

MCP Resources

If one of your MCP servers has resources you can reference them in the chat by typing the @ and selecting the server from the list.

Then continue typing the name of the resource you want to add to the context.

How to use STDIO MCP servers

AtomicServer does not support mcp over stdio because the browser cannot access stdio. If you want to use an MCP server that only supports stdio you can use tools like Supergateway to convert stdio to Streamable HTTP or SSE.

Example:

npx -y supergateway \

--stdio "<MCP_SERVER_COMMAND>" \

--outputTransport streamableHttp --stateful \

--sessionTimeout 60000 --port <PORT>

Atomic Plugins

Atomic Plugins are applications that can run inside of an Atomic Server. They enhance the functionality of an Atomic Server by extending one or more classes.

For example they can be used to create more restrictive requirements for classes, like requiring names to start with an uppercase letter. They can also add dynamic properties to classes that get populated each time the resource is fetched.

Plugins can be created in any programming language that compiles to Wasm. For more information on how to create a plugin, see Creating Plugins.

Installing a plugin

To install a plugin you need to have write access to the drive that the plugin will be installed on. Navigate to the drive and click on the ‘Upload Plugin’ button. Select your plugin zip file. You will see a description of the plugin, any permissions it requires and a config field. Edit the config if needed and click ‘Install’. You can change the config at any time once the plugin is installed.

Giving your plugin access to resources

By default plugins do not have access to any resources unless they have the full-drive-access permission.

To add access to specific resources (and their children) navigate to the plugin page and add the resource in the ‘Assign Rights’ section.

Creating Plugins

Plugins can be made in any programming language that compiles to a Wasm component. To be specific, they should be compiled to WASM + WASI Preview 2 (aka wasip2).

If you are using Rust, you can use the atomic-plugin crate to help with some of the Wasm boilerplate.

Class Extenders

Plugins take the form of a class extender. A class extender exports a few functions that get called at specific moments by the server.

class-url: Returns a list of class URLs that the plugin extends.on-resource-get: Called when a resource is fetched from the server. You can modify the resource in place.before-commit: Called before a commit is applied to the server. If you return an error, the commit will be rejected.after-commit: Called after a commit is applied to the server. Returning an error will not cancel the commit.

They can also access a few functions provided by the server:

get-resource: Returns a resource by subject.query: Returns a list of resources that match the query.get-config: Returns the JSON config of the plugin.commit: Creates and applies a commit signed by the plugin’s agent.

These functions are documented and typed in the class-extender.wit file. You can use this file to generate bindings for your programming language of choice.

Namespaces

A plugin is identified by a namespace and a name. The namespace is used to group plugins together and the name is used to identify the plugin within its namespace. Plugins with the same namespace will share the same assets folder.

Permissions

Plugins can request permissions to enable certain features. These permissions are specified in the plugin manifest. You need to provide a reason for each permission so the user can understand why the plugin needs it.

The following permissions are available:

network: Allows the plugin to make network requests and fetch resources from remote AtomicServers.storage: Allows the plugin to read and write to the assets folder.full-drive-access: Allows the plugin access to all resources on the drive.extended-fuel: Allows the plugin to use extended fuel.extended-memory: Allows the plugin to use extended memory.custom-view: Allows the plugin to display a custom view in the Data Browser.

Note

If your Wasm component imports the

wasi-httpfeature without requesting thenetworkpermission, installation of the plugin will fail.

Access Rights

Each plugin gets its own agent that can be used to fetch resources and sign commits. By default the plugin agent does not have access to any resources. The user can grant access to specific resources on the plugin page.

Alternatively you can specify the full-drive-access permission in the plugin manifest to give the plugin agent full access to the drive.

The plugin package

A plugin should be packaged as a zip file containing the following files:

plugin.wasm: The compiled Wasm binary of the plugin.plugin.json: The plugin manifest.assets/: (Optional) A folder that will be included in the plugins zip file. The plugin will have access to this folder at runtime.ui.js: (Optional) A javascript file used for displaying a custom view in the Data Browser.ui.css: (Optional) A CSS file used for styling the custom view in the Data Browser.

You can use atomic-plugin to help with automating this process, even if you are not using Rust.

First install it using cargo:

cargo install atomic-plugin

Next run it and point it to your files:

atomic-plugin --wasm <path-to-wasm-file> --assets <path-to-assets-folder> --out <output-path>

The Plugin Manifest

A plugin manifest is a JSON file that describes the plugin.

It is located in the root of the plugin directory and is named plugin.json.

It contains the following properties:

name

string

The name of the plugin.

namespace

string

The namespace of the plugin.

description

sting

The markdown description of the plugin. This is shown to the user when the plugin is installed.

author

string

The author of the plugin.

version

string

The version of the plugin.

permissions

Array<{

permission: "network" | "storage" | "full-drive-access" | "extended-fuel" | "extended-memory" | "custom-view";

reason: string;

}>

A list of permissions the plugin requires. You also need to specify a reason for each permission so the user can understand why the plugin needs it.

defaultConfig

Record<string, any>;

The default configuration of the plugin. The user can edit the config once the plugin is installed.

configSchema

JSONSchema7;

The JSON Schema of the plugin’s config. This helps the user understand the config and gives them feedback when the config is invalid.

Custom Views

Along with a Wasm class extender, plugins can also include a JavaScript bundle to add custom views to the AtomicServer Data Browser.

To enable a custom view, include the custom-view permission in your plugin manifest.

How Custom Views Are Loaded

When a user navigates to a resource whose class is handled by your plugin, the Data Browser renders the custom view inside a sandboxed, null-origin <iframe>. This means your plugin UI runs in complete isolation from the parent page — it cannot access the parent’s DOM, storage, or JavaScript context.

The iframe receives a generated HTML document that:

- Loads a reset stylesheet.

- Optionally loads your

ui.cssfile. - Injects the current theme as CSS custom properties via a

<style>block. - Loads your

ui.jsas a<script type="module">.

A strict Content Security Policy is applied: only scripts and styles with the correct nonce are allowed to run. External scripts or inline scripts without the nonce will be blocked.

Bundle Requirements

Because the view is loaded as a single HTML document inside an iframe, code splitting is not supported. Your build must produce:

ui.js— a single JavaScript file (no chunks). This file is required.ui.css— an optional single CSS file.

Important

It is currently not possible to include external assets (images, fonts, etc.) in your plugin UI. Any assets must be inlined into

ui.jsorui.css(e.g. base64-encoded data URIs).

Configure your bundler to disable code splitting. For example, with Vite + Rolldown:

// vite.config.ts

export default {

build: {

assetsDir: '',

rolldownOptions: {

output: {

codeSplitting: false,

assetFileNames: 'ui.[ext]',

entryFileNames: 'ui.js',

},

},

},

};

When configured like this, you can still make as many js and css files as you want and they will then be bundled into a single js and css file.

Choosing a UI Framework

Because the plugin JS bundle must be self-contained and small, prefer a lightweight framework like SolidJS over React. React (and ReactDOM) add ~130 kB to your bundle, whereas SolidJS compiles away to vanilla DOM operations and adds only a few kilobytes.

The test plugin uses SolidJS with the vite-plugin-solid plugin as a reference implementation.

Communicating with the Data Browser

Since the plugin runs in a sandboxed iframe, it cannot directly call the Atomic Store or make authenticated requests. All communication with the host Data Browser is handled via postMessage, abstracted by the RPCClient from the @tomic/plugin package.

Setting Up

Install the package:

npm install @tomic/plugin

Create an RPCClient instance once when your app starts:

import { RPCClient } from '@tomic/plugin';

const rpc = new RPCClient();

RPCClient API

getPageContext(): Promise<PageContext>

Returns the current page context, including the resource being viewed and the current user’s agent subject.

const { resource, agent } = await rpc.getPageContext();

console.log(resource.subject); // the URL of the current resource

interface PageContext {

resource: Resource;

agent?: string; // Subject of the user's agent

}

getResource(subject: string): Promise<Resource>

Fetches a resource from the host store by its subject URL.

const resource = await rpc.getResource('https://example.com/my-resource');

Resource:

interface Resource {

subject: string;

title: string;

loading: boolean;

props: Record<string, JSONValue>;

}

Access control: The plugin can read a resource without any user interaction if any of the following conditions are true:

- The resource is the current page resource (the one the plugin view is rendering).

- The resource’s parent is the current page resource.

- Any ancestor of the resource satisfies either of the above.

- The plugin’s agent is listed in the resource’s (or any ancestor’s)

readorwriterights.

If none of these conditions are met, the user is shown a Read Request dialog asking them to allow or deny access to that specific resource. The user can also check “Allow all reads done by this plugin” to permanently grant the plugin blanket read access. Previously granted permissions are persisted, so the dialog will not appear again for the same resource. If the user denies the request, the promise rejects with an error.

commit(commit: Commit): Promise<{ success: true }>

Applies a commit to a resource. The commit is signed by the user’s agent.

await rpc.commit({

subject: 'https://example.com/my-resource',

set: {

'https://atomicdata.dev/properties/name': 'New name',

},

});

interface Commit {

subject: string;

set?: Record<string, JSONValue>;

push?: Record<string, unknown[]>;

remove?: string[];

destroy?: boolean;

}

Access control: The same scope rules as getResource apply, but for write access. The plugin can write without a prompt if:

- The target resource is the current page resource.

- The target resource’s parent is the current page resource.

- Any ancestor of the resource satisfies either of the above.

- The plugin’s agent is listed in the resource’s (or any ancestor’s)

writerights.

If none of these conditions are met, the user is shown a Write Request dialog. As with read access, the user can permanently grant blanket write permission, and previously granted permissions are persisted. If the user denies, the promise rejects with an error.

Note

Commits that target plugin resources are always blocked, regardless of permissions. A plugin cannot modify itself or any other plugin resource.

subscribe(subject: string, callback: (resource: Resource) => void): () => void

Subscribes to live updates for a resource. Returns an unsubscribe function.

const unsubscribe = rpc.subscribe('https://example.com/my-resource', (resource) => {

console.log('Resource updated:', resource);

});

// Later, to stop listening:

unsubscribe();

navigate(subject: string): Promise<boolean>

Navigates the Data Browser to a different resource.

await rpc.navigate('https://example.com/other-resource');

pickResource(options?): Promise<Resource | undefined>

Opens a resource picker dialog in the Data Browser. Resolves with the selected resource, or undefined if the user cancels.

const picked = await rpc.pickResource({

title: 'Select a document',

message: 'Pick the document you want to link.',

isA: 'https://atomicdata.dev/classes/Document', // optional: filter by class

scope: 'https://example.com/my-drive', // optional: limit search scope

});

pickFile(options?): Promise<Resource | undefined>

Opens a file picker dialog. The user can select an existing file on AtomicServer or upload a new one. Resolves with the file resource, or undefined if cancelled.

const file = await rpc.pickFile({

allowedMimes: ['image/png', 'image/jpeg'],

});

API

The API of AtomicServer uses Atomic Data.

All Atomic Data resources have a unique URL, which can be fetched using HTTP. Every single Class, Property or Endpoint also is a resource, which means you can visit these in the browser! This effectively makes most of the API browsable and self-documenting.

Every individual resource URL can be fetched using a GET request using your favorite HTML tool or library.

You can also simply open every resource in your browser!

If you want some specific representation (e.g. JSON), you will need to add an Accept header to your request.

# Fetch as JSON-AD (de facto standard for Atomic Data)

curl -i -H "Accept: application/ad+json" https://atomicdata.dev/properties/shortname

# Fetch as JSON-LD

curl -i -H "Accept: application/ld+json" https://atomicdata.dev/properties/shortname

# Fetch as JSON

curl -i -H "Accept: application/json" https://atomicdata.dev/properties/shortname

# Fetch as Turtle / N3

curl -i -H "Accept: text/turtle" https://atomicdata.dev/properties/shortname

Endpoints

The various Endpoints in AtomicServer can be seen at /endpoints of your local instance.

These include functionality to create changes using /commits, query data using /query, get /versions, or do full-text search queries using /search.

Typically, you pass query parameters to these endpoints to specify what you want to do.

Libraries or API?

You can use the REST API if you want, but it’s recommended to use one of our libraries.

How to create and publish a JSON-AD file

JSON-AD is the default serialization format of Atomic Data. It’s just JSON, but with some extra requirements.

Most notably, all keys are links to Atomic Properties. These Properties must be actually hosted somewhere on the web, so other people can visit them to read more about them.

Ideally, in JSON-AD, each Resource has its own @id.

This is the URL of the resource.

This means that if someone visits that @id, they should get the resource they are requesting.

That’s great for people re-using your data, but as a data provider, implementing this can be a bit of a hassle.

That’s why there is a different way that allows you to create Atomic Data without manually hosting every resource.

Creating JSON-AD without hosting individual resources yourself

In this section, we’ll create a single JSON-AD file containing various resources. This file can then be published, shared and stored like any other.

The goal of this preparation, is to ultimately import it somewhere else. We’ll be importing it to Atomic-Server. Atomic-Server will create URLs for every single resource upon importing it. This way, we only deal with the JSON-AD and the data structure, and we let Atomic-Server take care of hosting the data.

Let’s create a BlogPost.

We know the fields that we need: a name and some body.

But we can’t use these keys in Atomic Data, we should use URLs that point to Properties.

We can either create new Properties (see the Atomic-Server tutorial), or we can use existing ones, for example by searching on AtomicData.dev/properties.

Setting the first values

{

"https://atomicdata.dev/properties/name": "Writing my first blogpost",

"https://atomicdata.dev/properties/description": "Hi! I'm a blogpost. I'm also machine readable!",

}

Adding a Class

Classes help others understanding what a Resource’s type is, such as BlogPost or Person. In Atomic Data, Resources can have multiple classes, so we should use an Array, like so:

{

"https://atomicdata.dev/properties/name": "Writing my first blogpost",

"https://atomicdata.dev/properties/description": "Hi! I'm a blogpost. I'm also machine readable!",

"https://atomicdata.dev/properties/isA": ["https://atomicdata.dev/classes/Article"],

}

Adding a Class helps people to understand the data, and it can provide guarantees to the data users about the shape of the data: they now know which fields are required or recommended. We can also use Classes to render Forms, which can be useful when the data should be edited later. For example, the BlogPost item

Using exsisting Ontologies, Classes and Ontologies

Ontologies are groups of concepts that describe some domain. For example, we could have an Ontology for Blogs that links to a bunch of related Classes, such as BlogPost and Person. Or we could have a Recipy Ontology that describes Ingredients, Steps and more.

At this moment, there are relatively few Classes created in Atomic Data. You can find most on atomicdata.dev/classes.

So possibly the best way forward for you, is to define a Class using the Atomic Data Browser’s tools for making resources.

Multiple items

If we want to have multiple items, we can simply use a JSON Array at the root, like so:

[{

"https://atomicdata.dev/properties/name": "Writing my first blogpost",

"https://atomicdata.dev/properties/description": "Hi! I'm a blogpost. I'm also machine readable!",

"https://atomicdata.dev/properties/isA": ["https://atomicdata.dev/classes/Article"],

},{

"https://atomicdata.dev/properties/name": "Another blogpost",

"https://atomicdata.dev/properties/description": "I'm writing so much my hands hurt.",

"https://atomicdata.dev/properties/isA": ["https://atomicdata.dev/classes/Article"],

}]

Preventing duplication with localId

When we want to publish Atomic Data, we also want someone else to be able to import it. An important thing to prevent, is data duplication. If you’re importing a list of Blog posts, for example, you’d want to only import every article once.

The way to preventing duplication, is by adding a localId.

This localId is used by the importer to find out if it has already imported it before.

So we, as data producers, need to make sure that our localId is unique and does not change!

We can use any type of string that we like, as long as it conforms to these requirements.

Let’s use a unique slug, a short name that is often used in URLs.

{

"https://atomicdata.dev/properties/name": "Writing my first blogpost",

"https://atomicdata.dev/properties/description": "Hi! I'm a blogpost. I'm also machine readable!",

"https://atomicdata.dev/properties/isA": ["https://atomicdata.dev/classes/Article"],

"https://atomicdata.dev/properties/localId": "my-first-blogpost",

}

Describing relationships between resources using localId

Let’s say we also want to describe the author of the BlogPost, and give them an e-mail, a profile picture and some biography.

This means we need to create a new Resource for each Author, and again have to think about the properties relevant for Author.

We’ll also need to create a link from BlogPost to Author, and perhaps the other way around, too.

Normally, when we link things in Atomic Data, we can only use full URLs.

But, since we don’t have URLs yet for our Resources, we’ll need a different solution.

Again, this is where we can use localId!

We can simply refer to the localId, instead of some URL that does not exist yet.

[{

"https://atomicdata.dev/properties/name": "Writing my first blogpost",

"https://atomicdata.dev/properties/description": "Hi! I'm a blogpost. I'm also machine readable!",

"https://atomicdata.dev/properties/author": "jon",

"https://atomicdata.dev/properties/isA": ["https://atomicdata.dev/classes/Article"],

"https://atomicdata.dev/properties/localId": "my-first-blogpost"

},{

"https://atomicdata.dev/properties/name": "Another blogpost",

"https://atomicdata.dev/properties/description": "I'm writing so much my hands hurt.",

"https://atomicdata.dev/properties/author": "jon",

"https://atomicdata.dev/properties/isA": ["https://atomicdata.dev/classes/Article"],

"https://atomicdata.dev/properties/localId": "another-blogpost"

},{

"https://atomicdata.dev/properties/name": "Jon Author",

"https://atomicdata.dev/properties/isA": ["https://atomicdata.dev/classes/Person"],

"https://atomicdata.dev/properties/localId": "jon"

}]

Importing data using Atomic Sever

Press the import button in the resource menu (at the bottom of the screen).

Then you paste your JSON-AD in the text area, and press import.

AtomicServer FAQ & Troubleshooting

I can’t find my question, I need support

- Create an issue on github or join the discord!

Do I need NGINX or something?

No, AtomicServer has its own HTTPS support. Just pass a --https flag!

Can / should I create backups?

You should.

Run atomic-server export to create a JSON-AD backup in your ~/.config/atomic/backups folder.

Import them using atomic-server import -p ~/.config/atomic/backups/${date}.json.’

You could also copy all folders atomic-server uses. To see what these are, see atomic-server show-config.

I lost the key / secret to my Root Agent, and the /setup invite is no longer usable! What now?

You can run atomic-server --initialize to recreate the /setup invite. It will be reset to 1 usage.

How do I migrate my data to a new domain?

There are no helper functions for this, but you could atomic-server export your JSON-AD, and find + replace your old domain with the new one.

This could especially be helpful if you’re running at localhost:9883 and want to move to a live server.

How do I reset my database?

atomic-server reset

How do I make my data private, yet available online?

You can press the menu icon (the three dots in the navigation bar), go to sharing, and uncheck the public read right.

See the Hierarchy chapter in the docs on more info of the authorization model.

Items are missing in my Collections / Search results

You might have a problem with your indexes.

Try rebuilding the indexes using atomic-server --rebuild-indexes.

Also, if you can, recreate and describe the indexing issue in the issue tracker, so we can fix it.

I get a failed to retrieve error when opening

Try re-initializing atomic server atomic-server --initialize.

Can I embed AtomicServer in another application?

Yes. This is what I’m doing with the Tauri desktop distribution of AtomicServer.

Check out the desktop code for an example!

I want to use my own authorization. How do I do that?

You can disable all authorization using --public-mode.

Make sure AtomicServer is not publicly accessible, because this will allow anyone to read any data.

Where is my data stored on my machine?

It depends on your operating system, because some data is temporary, others are configuration files, and so forth. Run atomic-server show-config to see the used paths. You can overwrite these if you want, see --help.

https://user-images.githubusercontent.com/2183313/139728539-d69b899f-6f9b-44cb-a1b7-bbab68beac0c.mp4

Client libraries for Atomic Data

Libraries and clients (all MIT licenced) that work great with atomic-server:

- Typescript / javascript library: @tomic/lib

- React library: @tomic/react

- Type CLI (npm): @tomic/cli for generating TS types from ontologies

- Svelte library: @tomic/svelte

- Client CLI (rust): atomic-cli for fetching & editing data

- Rust library: atomic-lib powers

atomic-serverandatomic-cli, and can be used in other Rust projects (docs.rs) - Raycast Extension: full-text search

Want to add to this list? Some ideas for tooling

This document contains a set of ideas that would help achieve that success. Open a PR and edit this file to add your project!

Atomic Companion

A mobile app for granting permissions to your data and signing things. See github issue.

- Show a notification when you try to log in somewhere with your agent

- Notifications for mentions and other social items

- Check uptime of your server

Atomizer (data importer and conversion kit)

- Import data from some data source (CSV / SQL / JSON / RDF), fill in the gaps (mapping / IRI creation / datatypes) an create new Atoms

- Perhaps a CLI, library, GUI or a combination of all of these

Atomic Preview

- A simple (JS) widget that can be embedded anywhere, which converts an Atomic Graph into an HTML view.

- Would be useful for documentation, and as a default view for Atomic Data.

- Use

@tomic/reactand@tomic/libto get started

Atomic-Dart + Flutter

Library + front-end app for browsing / manipulating Atomic Data on mobile devices.

Atomic Data Javscript libraries & clients

If you want to work with data from your AtomicServer you can use the following libraries.

@tomic/lib

Core JS library for AtomicServer, handles data fetching, parsing, storing, signing commits, setting up websockets and full-text search and more.

@tomic/react

React hooks for fetching and subscribing to AtomicServer data.

@tomic/svelte

Svelte functions for fetching and subscribing to AtomicServer data.

@tomic/cli

Generate Typescript types from your AtomicServer ontologies.

@tomic/template

Generate NextJS / SvelteKit / (more) templates with AtomicServer integration.

@tomic/lib: The Atomic Data library for typescript/javascript

Core typescript library for fetching data, handling JSON-AD parsing, storing data, signing Commits, setting up WebSockets and full-text search and more.

Runs in most common JS contexts like the browser, node, deno, bun etc.

Installation

Install using your preferred package manager:

npm install @tomic/lib

pnpm add @tomic/lib

deno add npm:@tomic/lib

Create a Store

import { Store, Agent, core } from '@tomic/lib';

const store = new Store({

// You can create a secret from the `User settings` page using the AtomicServer UI

agent: Agent.fromSecret('my-secret-key'),

// Set a default server URL

serverUrl: 'https://my-atomic-server.dev',

});

Fetching a resource and reading its data

// When the class is known.

const resource = await store.getResource<Person>('https://my-atomic-server.dev/some-resource');

const job = resource.props.job;

// When the class is unknown

const resource = await store.getResource('https://my-atomic-server.dev/some-resource');

const job = resource.get(myOntology.properties.job);

Editing a resource

resource.set(core.properties.description, 'Hello World');

// Commit the changes to the server.

await resource.save();

Creating a new resource

const newResource = await store.newResource({

isA: myOntology.classes.person,

propVals: {

[core.properties.name]: 'Jeff',

},

});

// Commit the new resource to the server.

await newResource.save();

Subscribing to changes

// --------- Subscribe to changes (using websockets) ---------

const unsub = store.subscribe('https://my-atomic-server.dev/some-resource', resource => {

// This callback is called each time a change is made to the resource on the server.

// Do something with the changed resource...

});

What’s next?

Next check out Store to learn how to set up a store and fetch data.

If you rather want to see a step-by-step guide on how to use the library in a project check out the Astro + AtomicServer Guide

Store

The Store class is a central component in the @tomic/lib library that provides a convenient interface for managing and interacting with atomic data resources. It allows you to fetch resources, subscribe to changes, create new resources, perform full-text searches, and more.

Setting up a store

Creating a store is done with the Store constructor.

const store = new Store();

It takes an object with the following options

| Name | Type | Description |

|---|---|---|

| serverUrl | string | URL of your atomic server |

| agent | Agent | (optional) The agent the store should use to fetch resources and to sign commits when editing resources, defaults to a public agent |

const store = new Store({

serverUrl: 'https://my-atomic-server.com',

agent: Agent.fromSecret('my-agent-secret'),

});

Note

You can always change or set both the serverUrl and agent at a later time using

store.setServerUrl()andstore.setAgent()respectively.

One vs Many Stores

Generally in a client application with one authenticated user, you’ll want to have a single instance of a Store that is shared throughout the app.

This way you’ll never fetch resources more than once while still receiving updates via websocket messages.

If store is used on the server however, you might want to consider creating a new store for each request as a store can only have a single agent associated with it and changing the agent will reauthenticate all websocket connections.

Fetching resources

Note

If you’re using atomic in a frontend library like React or Svelte there might be other ways to fetch resources that are better suited to those libraries. Check @tomic/react or @tomic/svelte

Fetching resources is generally done using the store.getResource() method.

const resource = await store.getResource('https://my-resource-subject');

getResource takes the subject of the resource as a parameter and returns a promise that resolves to the requested resource.

The store will cache the resource in memory and subscribe to the server for changes to the resource, subsequent requests for the resource will not fetch over the network but return the cached version.

Subscribe to changes

Atomic makes it easy to build real-time applications. When you subscribe to a subject you get notified every time the resource changes on the server.

store.subscribe('https://my-resource-subject', myResource => {

// do something with the changed resource.

console.log(`${myResource.title} changed!`);

});

Unsubscribing

You should not forget to unsubscribe your listeners as this can lead to a growing memory footprint (just like DOM event listeners).

To unsubscribe you can either use the returned unsubscribe function or call store.unsubscribe(subject, callback).

const unsubscribe = store.subscribe(

'https://my-resource-subject',

myResource => {

// ...

},

);

unsubscribe();

const callback = myResource => {

// ...

};

store.subscribe('https://my-resource-subject', callback);

store.unsubscribe('https://my-resource-subject', callback);

Creating new resources

Creating resources is done using the store.newResource method.

It takes an options object with the following properties:

| Name | Type | Description |

|---|---|---|

| subject | string | (optional) The subject the new resource should have, by default a random subject is generated |

| parent | string | (optional) The parent of the new resource, defaults to the store’s serverUrl |

| isA | string | string[] | (optional) The ‘type’ of the resource. determines what class it is. Supports multiple classes. |

| propVals | Record<string, JSONValue> | (optional) Any additional properties you want to set on the resource. Should be an object with subjects of properties as keys |

// Basic:

const resource = await store.newResource();

await resource.save();

// With options:

import { core } from '@tomic/lib';

const resource = await store.newResource({

parent: 'https://myatomicserver.com/some-folder',

isA: 'https://myatomicserver.com/article',

propVals: {

[core.properties.name]: 'How to create new resources',

[core.properties.description]: 'lorem ipsum dolor sit amet',

'https://myatomicserver.com/written-by':

'https://myatomicserver.com/agents/superman',

},

});

await resource.save();

Generating random subjects

In some cases you might need a subject before you have created the resource with that subject.

To generate a random subject, use the store.createSubject() method.

This method generates a new subject with the current serverURL as hostname and a random lowercased ULID string as the path.

The method also allows you to pass a parent subject to generate a subject under that parent.

const subject = store.createSubject();

// Result: https://myserver.com/01hw30e1w6t9y0y5aqg0aghhf4

// With parent subject

const subject = store.createSubject(parent.subject);

Warning

Keep in mind that subjects never change once they are set, even if the parent changes. This means you can’t reliably infer the parent from the subject.

Full-Text Search

AtomicServer comes included with a full-text search API. Using this API is very easy in @tomic/lib.

const results = await store.search('lorem ipsum');